Hi everyone, I am relatively new to the Sentinel-3 dataset and I am starting to work with the GPT app (and it's amazing for geoprocessing), so it's highly likely that I am not processing the data properly.

I want to use Sentinel-3 data for a data science project involving crop monitoring, so I downloaded 3 different images of south-west Uruguay:

1. S3A_OL_1_EFR____20201118T130641_20201118T130941_20201118T143941_0179_065_152_3600_LN1_O_NR_002.SEN3

2. S3B_OL_1_EFR____20201104T133054_20201104T133354_20201104T150406_0179_045_195_3600_LN1_O_NR_002.SEN3

3. S3B_OL_1_EFR____20201105T130444_20201105T130744_20201105T143840_0179_045_209_3600_LN1_O_NR_002.SEN3

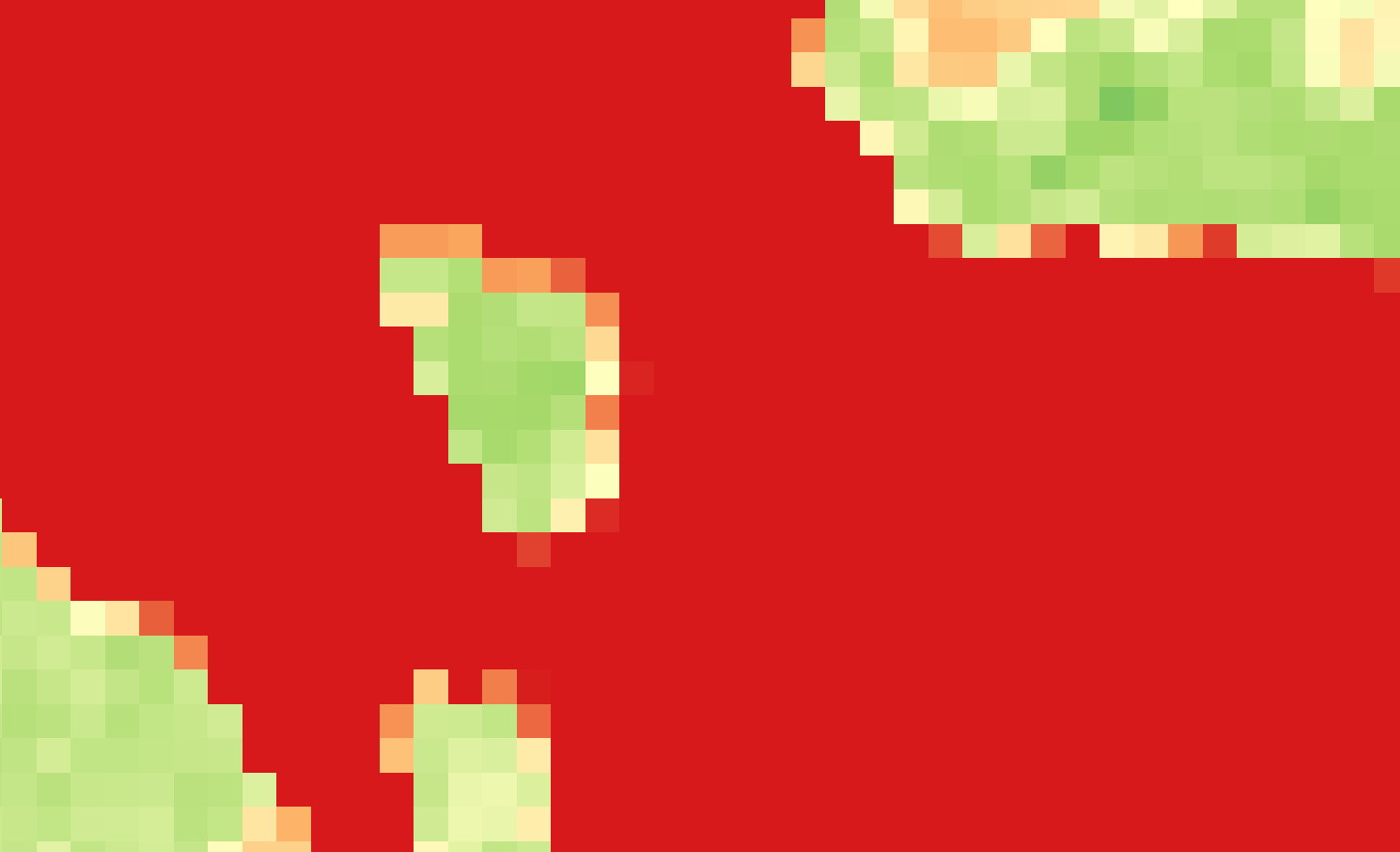

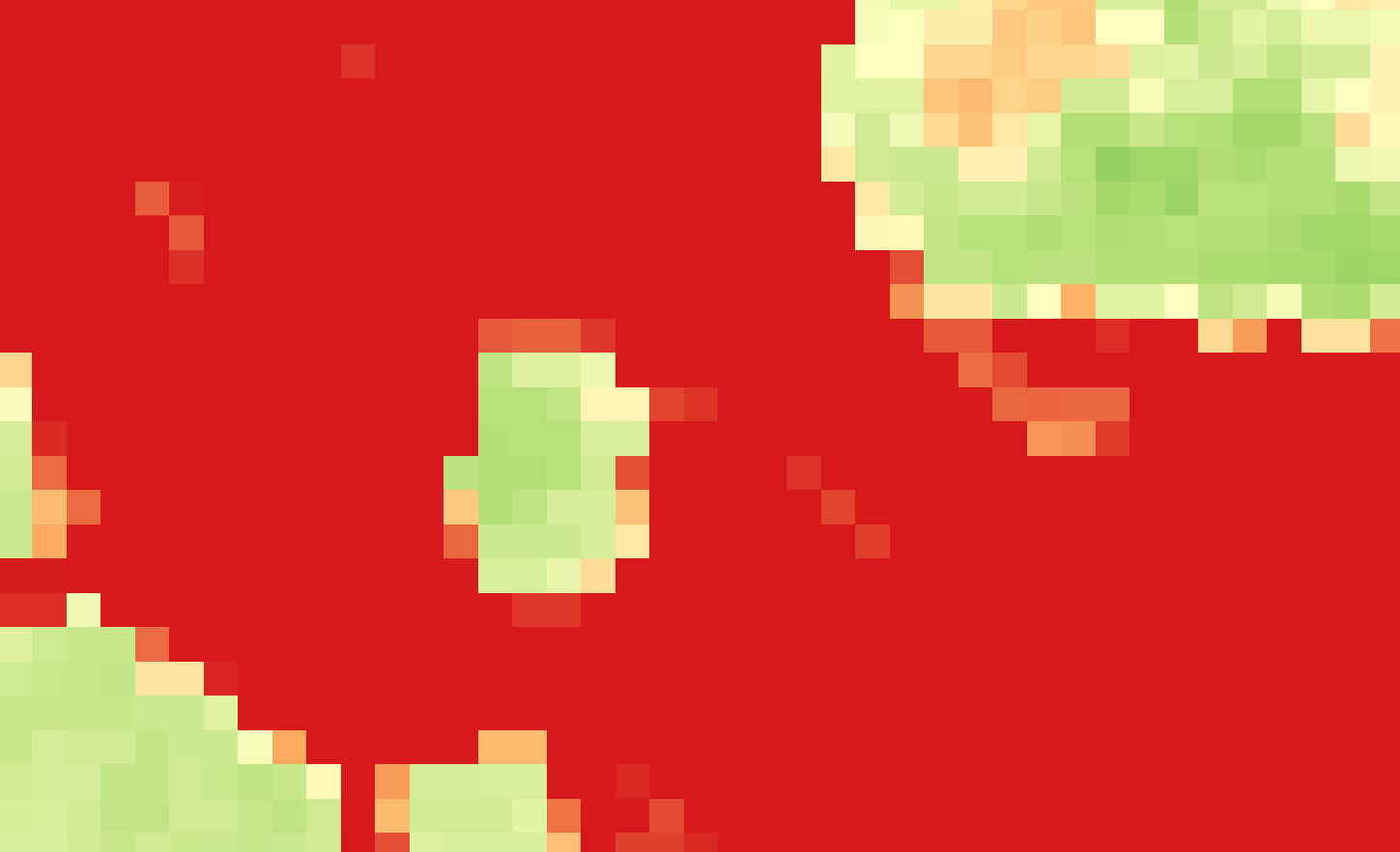

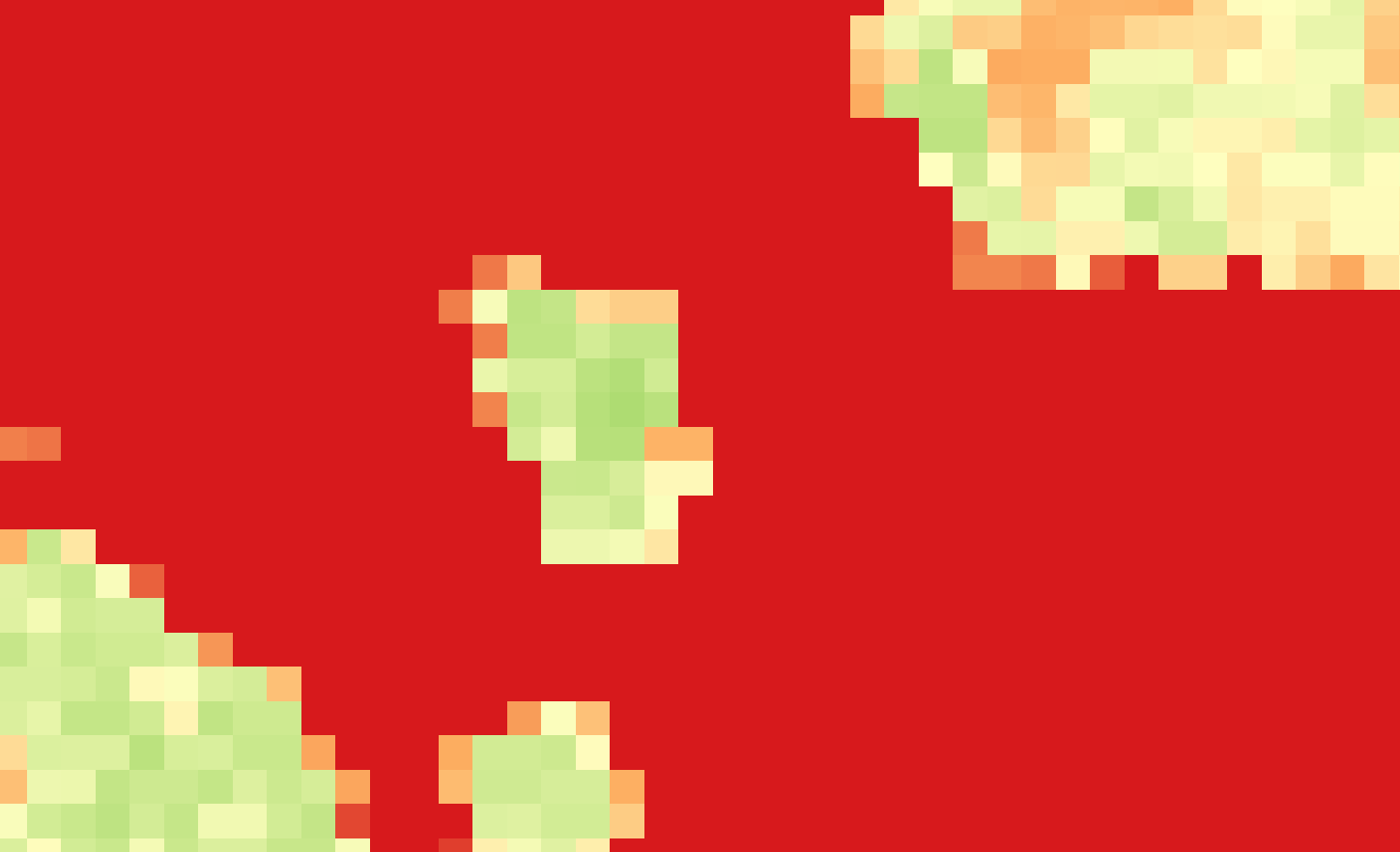

But when I started building the data model for ML training, I found that the images were misaligned with each other. The 2020-11-18 image seems better aligned, but the ones from 2020-11-04 and 2020-11-05 are offset by more than 300 meters in some cases. With an offset that large I will not be able to do any viable data processing. I understand that NRT products might not be 100% accurate (and perhaps I should be using another product), but I wanted to ask whether I am mishandling the raster data.

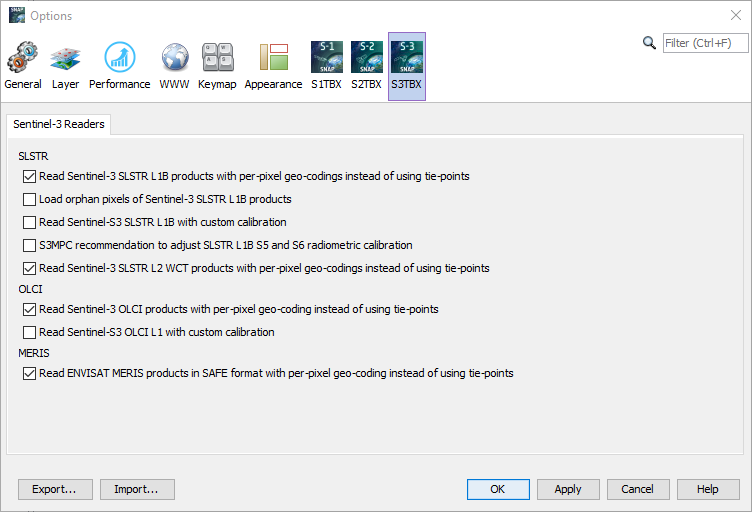

Are these kinds of offsets expected for S3 NRT, or is there something I am doing wrong? I attach the script I am using, along with some QGIS screenshots that show the level of misalignment I found. I suspect this might be a linear interpolation problem, but someone with more experience in S3 data processing might know better. Any tips on how I can properly process these datasets?
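To put a number on the offset instead of eyeballing it in QGIS, something like the following could be used. This is only a rough sketch and not part of the attached script: `estimate_shift` and `shift_in_metres` are hypothetical helper names, it assumes both images have already been resampled onto the same regular grid with the nominal 300 m OLCI full-resolution pixel spacing, and it only does a brute-force search over whole-pixel shifts (no sub-pixel estimate).

```python
def estimate_shift(ref, mov, max_shift=3):
    """Return (dy, dx) such that mov[y + dy][x + dx] best matches ref[y][x].

    ref and mov are 2-D lists of floats with identical shape (one band each).
    The score is the mean squared difference over the overlapping pixels.
    """
    rows, cols = len(ref), len(ref[0])
    best = None  # (score, dy, dx)
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            total, count = 0.0, 0
            for y in range(rows):
                for x in range(cols):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < rows and 0 <= xx < cols:
                        diff = ref[y][x] - mov[yy][xx]
                        total += diff * diff
                        count += 1
            if count == 0:
                continue  # no overlap for this candidate shift
            score = total / count
            if best is None or score < best[0]:
                best = (score, dy, dx)
    return best[1], best[2]


def shift_in_metres(shift, pixel_size_m=300.0):
    """Convert a (dy, dx) pixel shift to metres.

    pixel_size_m=300.0 is an assumption: the nominal OLCI EFR spacing
    on a regular grid after reprojection.
    """
    dy, dx = shift
    return dy * pixel_size_m, dx * pixel_size_m


if __name__ == "__main__":
    # Tiny synthetic example: one bright feature, moved by 1 row and 2 columns.
    ref = [[0.0] * 8 for _ in range(8)]
    ref[3][3], ref[3][4], ref[4][3] = 10.0, 5.0, 3.0
    mov = [[0.0] * 8 for _ in range(8)]
    mov[4][5], mov[4][6], mov[5][5] = 10.0, 5.0, 3.0

    shift = estimate_shift(ref, mov)
    print(shift, shift_in_metres(shift))
```

On real scenes the inputs would be the same band cropped to the same extent from two dates, and a shift of one pixel would already correspond to roughly 300 m on the ground.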

convert_rad_ref.xml (3.2 KB)