Yes, but even at 16GB making very big graphs should be avoided.

Please help me in solving this error.

Solving the error for you is not possible without complete information about your situation. Did you try to get it to work as a graph first?

Please have a look at the original post. I’ve answered there.

Btw, please don’t ask the same question in two threads. One time is usually enough.

Hello, I have the same problem. How can I solve it?

Please go through the solutions proposed above or ask a more specific question.

Hi. I’m facing the same problem. I’m working with very large COSMO-SkyMed Himage products, and I want to apply a multi-temporal speckle filter to my 6 images. Each image is about 1.8 GB. I’m running on a server with 32 GB of RAM, and SNAP doesn’t seem to use more than 20 GB. I tried stacking the images one by one but got the ‘Cannot construct DataBuffer’ error at the 4th.

I also tried to save the output to BigTIFF.

I’m using the SNAP GUI, where I already set the memory to 32 GB in the Performance options.

Do you have any idea what else could be the cause?

Thanks for any help.

I solved Java heap and data buffer memory errors in S1TBX (S1 TOPS) on a machine with 32 GB of memory by setting the SNAP cache size to 16384 (the largest of the 3 benchmark test values) under Tools > Options > Performance in the 64-bit version of SNAP. This seems to have the same effect as editing the -Xmx string in snap/etc/snap.conf.

Still, after memory-intensive operations, it helps to save the product and exit SNAP (to clear the memory?). You must save the processed product, or it is lost on exit. More memory is better.

Continuing the discussion from Error: Cannot construct DataBuffer:

Hi!

I am working on a computer at my university. The computer has 16 GB RAM.

I am trying to deburst (Radar > Sentinel 1 TOPS > Sentinel 1 TOPS Deburst) the interferogram (.ifg file), but the software says that it cannot construct DataBuffer. I read other comments on the forum but did not understand them.

Can you help me?

Have you already adjusted the -Xmx parameter in \snap\etc\snap.conf?

This error pops up often; we will need to add this to the FAQ and prepare a guide for JVM parameter adjustment. @obarrilero

It is already in the FAQ (I think @marpet added it), but not yet published:

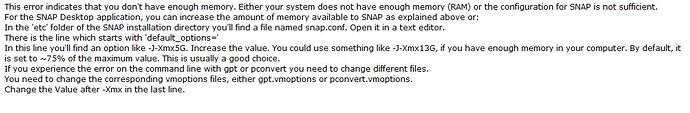

I’m getting the Error “Cannot construct DataBuffer”. What can I do?

"This error indicates that you don’t have enough memory. Either your system does not have enough memory (RAM) or the configuration for SNAP is not sufficient.

For the SNAP Desktop application, you can increase the amount of memory available to SNAP.

In the ‘etc’ folder of the SNAP installation directory you’ll find a file named snap.conf. Open it in a text editor.

There is the line which starts with ‘default_options=’

In this line you’ll find an option like -J-Xmx5G. Increase the value. You could use something like -J-Xmx13G if you have enough memory in your computer. By default, it is set to ~75% of the maximum value. This is usually a good choice.

If you experience the error on the command line with gpt or pconvert, you need to change different files.

You need to change the corresponding vmoptions files, either gpt.vmoptions or pconvert.vmoptions.

Change the value after -Xmx in the last line."
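The snap.conf edit described in the FAQ text can also be scripted. The following is only a minimal sketch: the function name `set_xmx` and the sample line are made up for illustration, and your real `default_options=` line will contain other options. Back up snap.conf before changing it.

```python
import re

def set_xmx(conf_text, new_value):
    """Replace the -J-Xmx value on the line starting with default_options=.

    Hypothetical helper for illustration; it only rewrites text and
    does not touch any file.
    """
    out = []
    for line in conf_text.splitlines(keepends=True):
        if line.lstrip().startswith("default_options="):
            # Swap only the heap option, e.g. -J-Xmx5G -> -J-Xmx13G.
            line = re.sub(r"-J-Xmx\d+[kKmMgG]?",
                          lambda m: "-J-Xmx" + new_value,
                          line)
        out.append(line)
    return "".join(out)

# Illustrative line only, not copied from a real snap.conf.
sample = 'default_options="-J-Xms256M -J-Xmx5G"\n'
print(set_xmx(sample, "13G"), end="")
```

To apply it, read snap.conf into a string, pass it through the function, and write the result back. For gpt or pconvert the vmoptions files use a plain -Xmx entry instead, so the pattern would need adjusting.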

I have just created a new JIRA issue in order to improve the help of the performance parameters in SNAP: https://senbox.atlassian.net/browse/SNAP-1061

Hi!

Yes, we changed the value of -Xmx from 11g to 25g and I got the result.

Thank you for the help!

I had the same data buffer issue when trying to run Snaphu Import on a full unwrapped subswath with 32 GB of RAM. I tried every combination of increased cache size and -Xmx I could think of.

What worked for me was to disable caching by setting the cache size to 0 in the performance options.

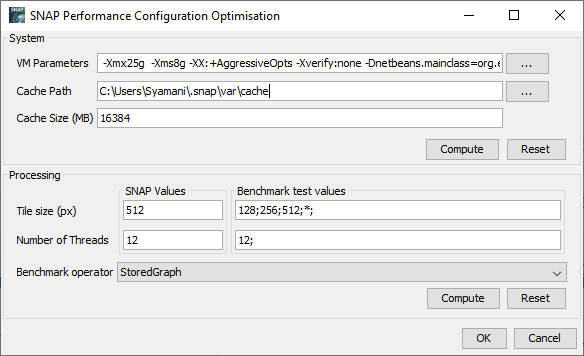

The same thing happened to me, even though my laptop has 32 GB of physical memory. Then I changed the settings in the SNAP Configuration Optimiser as shown in the picture.

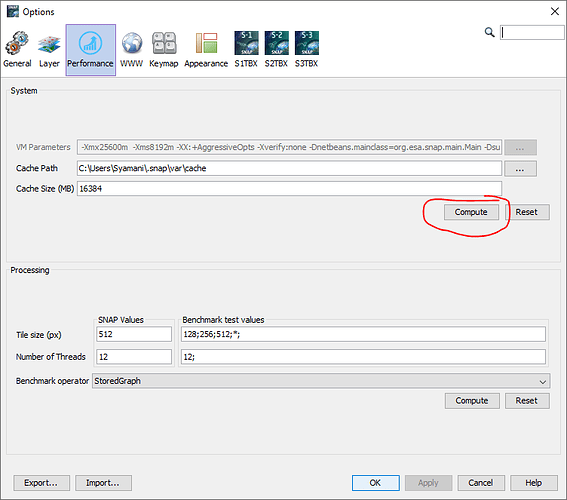

Then in the SNAP application, click Tools - Options - Performance, and click the Compute button as shown.

It worked for me.

If your RAM is 64 GB, how much can we set for -Xmx? Is there a way to know that?

And,

Did you also change the cache size?

Thanks.

Some users reported 50% was ideal for them, others used 75%.

So you can try both 32G and 48G, and see which makes best use of your hardware in terms of performance.

Yes, thanks.
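For other machines, the same arithmetic can be sketched in a few lines. The helper name `xmx_candidates` is hypothetical, and the 50% and 75% fractions are simply the values users reported above, not an official recommendation.

```python
def xmx_candidates(ram_gb, fractions=(0.5, 0.75)):
    """Return -Xmx settings for the given fractions of installed RAM (in GB).

    Hypothetical helper; values are rounded down to whole gigabytes.
    """
    return ["-Xmx{}G".format(int(ram_gb * f)) for f in fractions]

print(xmx_candidates(64))  # ['-Xmx32G', '-Xmx48G']
print(xmx_candidates(16))  # ['-Xmx8G', '-Xmx12G']
```

Try each candidate in turn and keep whichever runs your workflow without the DataBuffer error while leaving enough memory for the operating system.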