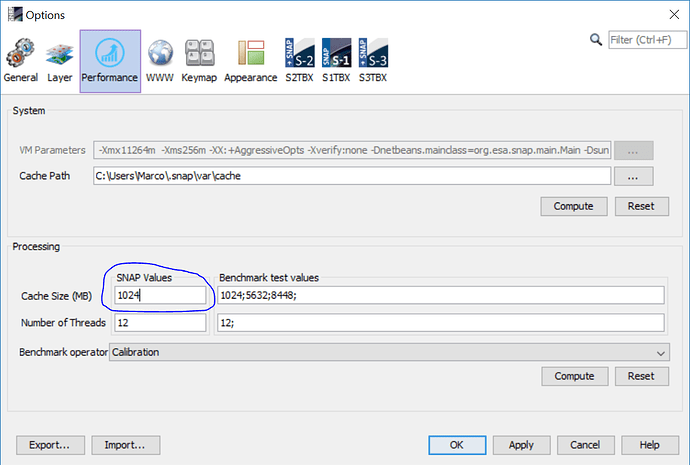

If you want to process such an amount, it is a good idea to change the tile cache size. This setting is separate from the overall memory SNAP can use. In the desktop application, you can change the cache size in the options panel (Tools / Options in the menu).

If you use gpt from the command line, you can use the -c option to set the cache size.

A good value for the cache size is ~75% of the overall memory available to SNAP/gpt. For gpt, the overall memory (the maximum Java heap) is set in the gpt.vmoptions file: change the value after -Xmx in the last line.
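To make the 75% rule concrete, here is a minimal Python sketch that reads the -Xmx value from the text of a vmoptions file and prints a matching gpt call. It is only an illustration: the helper names, the graph file name `my_graph.xml`, and the sample `-Xmx8G` setting are made up for this example, and it assumes that -c accepts a size with an `M` suffix, so check `gpt -h` on your installation.

```python
import re


def xmx_to_megabytes(vmoptions_text):
    """Return the -Xmx value in megabytes, or None if no -Xmx line is found."""
    match = re.search(r"^-Xmx(\d+)([kKmMgG]?)\s*$", vmoptions_text, re.MULTILINE)
    if match is None:
        return None
    value = int(match.group(1))
    unit = match.group(2).lower()
    # Conversion factors to megabytes; no suffix means the value is in bytes.
    factors = {"": 1 / (1024 * 1024), "k": 1 / 1024, "m": 1, "g": 1024}
    return int(value * factors[unit])


def suggested_cache_option(vmoptions_text, fraction=0.75):
    """Return a cache size string (in megabytes) as a fraction of -Xmx."""
    heap_mb = xmx_to_megabytes(vmoptions_text)
    if heap_mb is None:
        raise ValueError("no -Xmx setting found")
    return f"{int(heap_mb * fraction)}M"


# Hypothetical vmoptions content; your file will contain other lines as well.
example_vmoptions = "-Xms2G\n-Xmx8G\n"
cache = suggested_cache_option(example_vmoptions)

# my_graph.xml is a placeholder for your own graph file.
print(f"gpt my_graph.xml -c {cache}")
```

With 8 GB of heap, this suggests a cache of 6144 MB. The value passed to -c has to stay below the -Xmx value, which the 75% rule takes care of.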