So now it works? Cool!

Let me know. Thanks

How is MASTER to be defined in project.conf?

I added the full path to the split product, but nothing happens and the command shell just returns to the input prompt.

If I enter anything else I get the message “split product is expected”.

I really wonder why everything worked like a charm with my first dataset and now there is this strange behaviour…

Indeed, I am still wondering what you changed between one processing run and the other, since the software you are using is the same.

The MASTER variable in project.conf must be the full path to the split and orbit-corrected master (processed with SNAP) in BEAM-DIMAP format.
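A quick sanity check of the MASTER entry could look like the sketch below. It assumes project.conf consists of plain `KEY=VALUE` lines with `#` comments; the helper names `read_conf` and `check_master` and the example path are hypothetical and not part of snap2stamps.

```python
from pathlib import Path


def read_conf(text):
    """Parse KEY=VALUE lines, skipping blank lines and '#' comments."""
    conf = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        conf[key.strip()] = value.strip()
    return conf


def check_master(conf):
    """Return a list of problems with the MASTER entry (empty if none)."""
    master = conf.get("MASTER", "")
    if not master:
        return ["MASTER is not set"]
    problems = []
    path = Path(master)
    if not path.is_absolute():
        problems.append("MASTER should be a full (absolute) path")
    if path.suffix.lower() != ".dim":
        problems.append("MASTER should point to a BEAM-DIMAP .dim file")
    if not path.is_file():
        problems.append("MASTER file does not exist: " + master)
    return problems


if __name__ == "__main__":
    # Hypothetical example content; the path is a placeholder.
    example = """
    # PROCESSING PARAMETERS
    MASTER=/data/project/master/20180101_IW2.dim
    """
    for problem in check_master(read_conf(example)):
        print("WARNING:", problem)
```

This only checks the path itself; whether the product was actually split and orbit-corrected still has to be verified in SNAP.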

If you need it, you can give me more information about the dataset and parameters so I can try to reproduce your problem. Or we can look at it together.

Let me know

Thank you. So this is simply the outcome of splitting_slaves.py, right?

Not really! For the MASTER, as written in SNAP2StaMPS_User_Manual.pdf, I suggest doing it in the GUI, ensuring that the selected bursts cover the entire AOI. With splitting_slaves.py you get the entire subswath; that could also be used, but the processing time is then much higher than when selecting only a few bursts for the MASTER.

I hope this helps.

Keep me updated

I see, thank you. This didn’t become clear to me when reading the manual, to be honest. What could be helpful would be a flow chart of the order of all included steps so the reader has an idea of what is to come.

However, simply applying all scripts technically worked the first time I used it. Probably because it was only one burst.

I will try it as you suggested.

Thanks for the feedback!

In fact, it is good to get real users’ feedback so I can improve the manual for the next release.

You are right, and there is nothing wrong with just applying the scripts, but full-swath interferograms take much more time than necessary when the AOI fits within a smaller area.

Full swath should work as well; there is no limitation on that. Perhaps memory allocation needs more careful consideration when configuring SNAP, I guess.

Let me know

So the extent of the master image defines how much of the slaves will be used for the coregistration and interferogram formation process?

Not exactly, but the time and space needed for processing increase with the size. For example, a 2-burst MASTER takes only 2-3 minutes on my machine (8 vCPUs, 32 GB RAM), whereas a full swath can take about an hour or more.

I do not know whether SNAP is internally able to pick only the slave burst(s) matching the master extent. Maybe somebody can answer this question (@marpet)?

Now I get it, thank you!

I don’t know much about SAR processing. I hardly know what bursts are.

So, I can’t answer if this is possible. Maybe @lveci or @jun_lu can answer this.

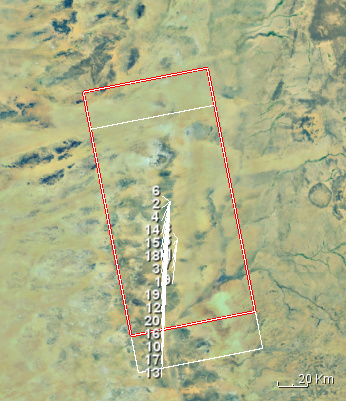

Is it a problem when the bursts are shifted along-track?

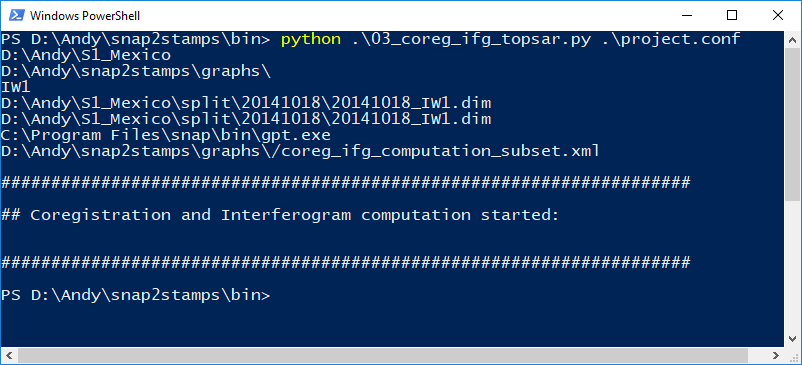

I get the message at step three, coregistration:

Error: [NodeId: Back-Geocoding] Same sub-swath is expected.

Hi @ABraun,

Actually I do not think so. Just ensure that your data covers your AOI.

What you show is an error message from the SNAP Back-Geocoding operator and not from the snap2stamps scripts. From the image, though, I think that one or more of your slave images do not cover the entire master, so there are probably bursts in the master with no counterpart in your slaves.

Can you check that? If so, could you put the slave image with the missing burst(s) in the slave folder to be assembled?

In addition, if you could check the content of the coreg_ifg2run.xml produced and used by SNAP, we would have a better overview of what is happening.
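One way to get that overview is to list the nodes of the graph and the files they read. The sketch below assumes the usual SNAP graph layout, with `<node>` elements holding an `<operator>` and a `<parameters>` block in which Read/Write nodes carry a `<file>` entry. The function name `summarize_graph` and the shortened example graph are hypothetical; a real coreg_ifg2run.xml contains more nodes and parameters.

```python
import xml.etree.ElementTree as ET


def summarize_graph(xml_text):
    """Return (node_id, operator, file) tuples for each node in a graph."""
    root = ET.fromstring(xml_text)
    summary = []
    for node in root.iter("node"):
        operator = node.findtext("operator", default="")
        # Read/Write nodes are assumed to carry their path in <parameters><file>.
        file_path = node.findtext("parameters/file", default="")
        summary.append((node.get("id"), operator, file_path))
    return summary


if __name__ == "__main__":
    # Hypothetical, heavily shortened graph with placeholder paths.
    example = """<graph id="Graph">
      <node id="Read"><operator>Read</operator>
        <parameters><file>/data/master/master_IW2.dim</file></parameters></node>
      <node id="Read(2)"><operator>Read</operator>
        <parameters><file>/data/split/slave_IW1.dim</file></parameters></node>
      <node id="Back-Geocoding"><operator>Back-Geocoding</operator>
        <parameters><demName>SRTM 1Sec HGT</demName></parameters></node>
    </graph>"""
    for node_id, operator, file_path in summarize_graph(example):
        print(node_id, operator, file_path)
```

Seeing the master and slave paths side by side makes it easier to spot inputs that come from different subswaths or from the wrong folder.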

Now I feel dumb…

I had written the wrong IW in project.conf, and therefore all slaves were outside the master.

Things like that happen to everybody. It is fine to keep checking the scripts to verify that everything works.

In such a case you just need to correct it in project.conf and launch the commands again. Snap2stamps will do the rest for you!

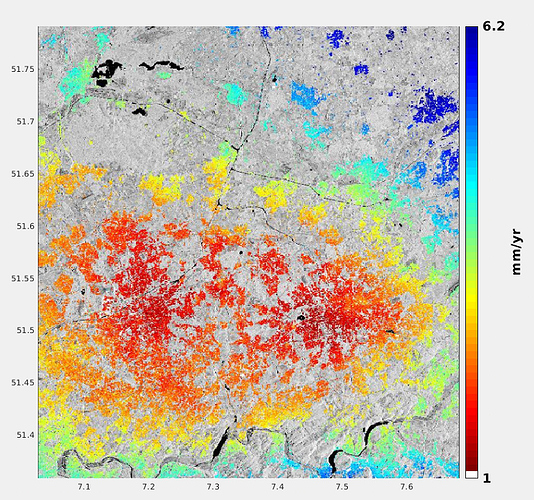

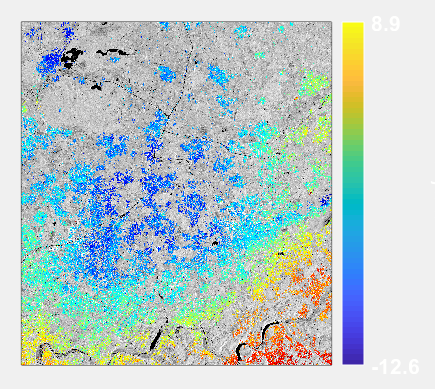

I have completed the analysis for the Ruhr area in Western Germany, which is subsiding heavily due to mining activities.

I used 36 Sentinel-1 images, which produced over 400,000 PS. After strong weeding (std=0.5), 150,000 remained, which gives a nice picture of the pattern. The only strange thing is that the values and the colour ramps are inverted. The largest displacement should actually be in the middle of the image.

But this is rather a StaMPS configuration issue and not related to the preprocessing, which works very well. Congratulations and thanks again!

This is ps_plot('vs',4)

Thanks @ABraun for sharing the results with us and for testing snap2stamps!

Just note that you have plotted the standard deviation of the velocities, ps_plot('vs'), and not the LOS deformation velocities minus DEM error, ps_plot('v-d'). The latter is probably the plot you are looking for.

Really glad to help make PSI with SNAP and StaMPS easier for users.

Still many things to be done and improved!

You were right…

You see, I haven’t reached the plotting part very often. But this will change in the future, now that your tool helps me to get through more quickly.

looks better!

I’m testing again at another site and found that the lat/lon bands and the DEM bands are written for every coregistered pair. This was probably easier to implement, but writing them only once could save a considerable amount of disk space (and a little computing time).

Just a thought, you probably have your reasons.

The DEM band is needed by the StaMPS export operator. I believe the same holds for lat/lon, but I am not sure, as it was not the case in a previous version of the operator. Maybe @marpet can say something more precise than me in that regard.

Indeed, having DEM and lat/lon in all the pairs wastes time and disk space.

We could think of a solution in the Python scripts to remove unnecessary data.

Thanks for the comment! Indeed, I should start thinking about optimizing and improving them.
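As a first step, the potential saving could be estimated before anything is removed. The sketch below assumes the pairs are stored as BEAM-DIMAP products, each with a `.data` folder holding one `.img` file per band. The band name fragments (`orthorectifiedlat`, `orthorectifiedlon`, `elevation`) and the function name `redundant_bytes` are assumptions to adjust to the actual output. It only reports sizes and deletes nothing, since the export step may still need these bands.

```python
from pathlib import Path

# Assumed lower-case name fragments of the repeated bands; adjust as needed.
REPEATED = ("orthorectifiedlat", "orthorectifiedlon", "elevation")


def redundant_bytes(root, fragments=REPEATED):
    """Sum the size of matching band files in every *.data folder but the first."""
    total = 0
    data_dirs = sorted(p for p in Path(root).rglob("*.data") if p.is_dir())
    for data_dir in data_dirs[1:]:  # one copy is kept, the rest is counted
        for band in data_dir.glob("*.img"):
            if any(fragment in band.name.lower() for fragment in fragments):
                total += band.stat().st_size
    return total


if __name__ == "__main__":
    # Hypothetical folder with the coregistered pairs; replace with a real path.
    saving = redundant_bytes("coreg")
    print("Repeated lat/lon/DEM bands use about %.1f MB" % (saving / 1e6))
```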