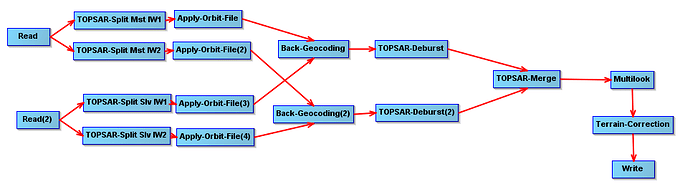

I’m trying to look at a long (>1 yr) time series of S1 IW SLC intensities for a somewhat funky area that combines one IW1 burst with three adjacent IW2 bursts. I’d love to do this in batch mode, back-geocoding everything to a single master. Here’s the workflow:

I realize this isn't interferometry, so I may not strictly need to back-geocode in slant-range geometry, but it feels better to do so. And I'm only making life harder for myself by combining one IW1 burst with three IW2 bursts in a single graph, but again, I'd rather not split things up if possible.

After reading various threads here and trying various things, I concluded that batch mode just wasn't going to work, because I couldn't control the master: I couldn't tell SNAP to keep a certain collect fixed as the master while using all the remaining input files as slaves. (I hate that terminology, by the way; can we switch over to "reference" and "secondary"?) Other threads suggest this is possible with StaMPS, but I'd rather not dive into command-line processing if I can help it.

Then I noticed a pattern in how batch mode seemed to read and process the files: the first Read node in the graph's XML file appears to be used to read the next file in the Batch Processing I/O list, in top-to-bottom order. The second Read node, however, doesn't seem to work that way. Note that by "the first Read node" I mean the first Read node you encounter when reading from the beginning of the graph's XML file, NOT necessarily the node labeled "Read" in the upper left of the graph's visual display.
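Since which Read node counts as "first" depends on XML order rather than on the visual layout, one quick way to check a saved graph is to list its Read nodes in document order. The Python sketch below assumes the usual SNAP graph XML layout (`<node>` elements with an `<operator>` child and a `<parameters><file>` child); the node ids and file paths in the example graph are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical graph: the node labeled "Read(2)" appears first in the XML,
# even though another node is labeled plain "Read".
EXAMPLE_GRAPH = """<graph id="Graph">
  <version>1.0</version>
  <node id="Read(2)">
    <operator>Read</operator>
    <sources/>
    <parameters>
      <file>/data/secondary_placeholder.zip</file>
    </parameters>
  </node>
  <node id="Read">
    <operator>Read</operator>
    <sources/>
    <parameters>
      <file>/data/master.zip</file>
    </parameters>
  </node>
</graph>"""


def read_nodes_in_order(graph_xml: str) -> list[tuple[str, str]]:
    """Return (node id, file parameter) for every Read node, in document order."""
    root = ET.fromstring(graph_xml)
    result = []
    # iter() walks the element tree in document (top-to-bottom) order.
    for node in root.iter("node"):
        if node.findtext("operator") == "Read":
            file_param = node.findtext("parameters/file", default="")
            result.append((node.get("id"), file_param.strip()))
    return result


if __name__ == "__main__":
    for position, (node_id, path) in enumerate(read_nodes_in_order(EXAMPLE_GRAPH), 1):
        print(f"Read node #{position}: id={node_id!r}, file={path!r}")
```

With the trick described here, the first entry printed would be the node that batch mode feeds from the I/O list, and the second would be the one pointing at the master.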

So if you use the second Read node to read the Master (which should NOT be included in the Batch Processing I/O list!) and the first Read node to read the secondaries (which ARE included in the I/O list)… it seems to work. The batch job ran without errors, although I believe it terminated early (after processing only 13 of the desired 49 secondaries, which I think/hope was a memory issue). All of the resulting products seem to get written to the same output directory (whether or not I check "keep source product name" in the Write node), which means the Master gets overwritten on every pass through the batch job, but that's not a problem. The product .dim file loses track of which secondaries have been created, but the .hdr and .img files for all of the secondaries are still stored in the output .data folder, ready to be dragged into the Product Explorer window (or read into Matlab).
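Because the .dim file loses track of the secondaries, one way to see which bands actually made it into the output .data folder is to look for matching .hdr/.img pairs. A minimal Python sketch, assuming each band is stored as a same-named ENVI header and raster pair; the folder path below is a placeholder, not a real product.

```python
from pathlib import Path


def list_band_images(data_dir: str) -> list[str]:
    """Return band names that have both an ENVI .hdr header and an .img raster."""
    folder = Path(data_dir)
    # Path.stem drops the extension, so "band.hdr" and "band.img" both give "band".
    headers = {p.stem for p in folder.glob("*.hdr")}
    images = {p.stem for p in folder.glob("*.img")}
    # Only report bands where both halves of the pair are present.
    return sorted(headers & images)


if __name__ == "__main__":
    # Placeholder path: point this at the Write node's output .data folder.
    for band in list_band_images("/path/to/output.data"):
        print(band)
```

Note that `glob` is case-sensitive on Linux, so files with upper-case extensions would be missed.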

So it feels like this works. I'd be glad of independent verification; this seems to be a problem that others have encountered and discussed here, with mixed results. While StaMPS seems like a useful tool, I'd rather not have to dust off my command-line processing memories in the hope of making it work for this purpose. Hopefully this trick of using the second Read node for the master is all one needs to back-geocode multiple secondaries to a single master in batch mode.