Dear forum,

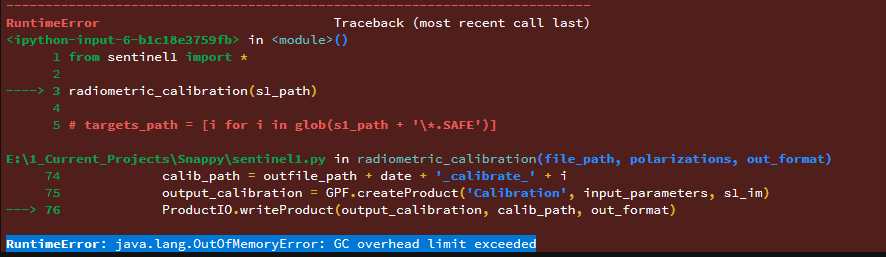

I have been working with SNAP's Python interface (snappy) and Sentinel-1 data for a little while, and I have run into the following problem. I need to process a large batch of SAR images and, despite setting java.max.memory to 80% of the RAM available on the processing unit, the processing crashes at some point with:

RuntimeError: java.lang.OutOfMemoryError: GC overhead limit exceeded

Is there any way to free memory during processing from within snappy? In my case I have more than 40 SLC SAR images, and I already run out of RAM during radiometric calibration (the first step of the workflow).

By the way, does anyone know how Python's gc module works?
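
As far as I understand it, Python's gc module only collects Python objects that are stuck in reference cycles; it does not directly manage the Java heap that raised the error above. Here is a minimal sketch of that behaviour using only the standard library (`Node` and `make_cycle` are hypothetical names I made up for illustration, not part of snappy):

```python
import gc


class Node:
    """Hypothetical object that can hold a reference to another Node."""

    def __init__(self):
        self.other = None


def make_cycle():
    # Two objects that reference each other: once this function returns,
    # nothing else points at them, but their reference counts never drop
    # to zero, so only the cycle collector can reclaim them.
    a, b = Node(), Node()
    a.other, b.other = b, a


gc.disable()          # pause automatic collection so the cycle survives
gc.collect()          # clear any garbage that already existed
make_cycle()
found = gc.collect()  # force a full collection; returns the number of
                      # unreachable objects it found
gc.enable()           # restore automatic collection

print("unreachable objects found:", found)
```

So calling `gc.collect()` inside a processing loop can drop leftover Python-side objects, but whether that also releases the memory held on the Java side is exactly what I am unsure about.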

Thanks a lot for your time and patience,

Joan