There are many sources of error in remote sensing data processing. For large displacements, such as those caused by seismic events, we can detect clearly recognizable fringes and estimate event epicenters and amplitudes. For small movements, however, we rely on various time series and spatial distribution analyses to estimate the movements and their probabilities. When StaMPS returns only a few pixels, those are the pixels for which the most accurate estimation is possible (depending on your PS selection), but that does not guarantee the results are always accurate.

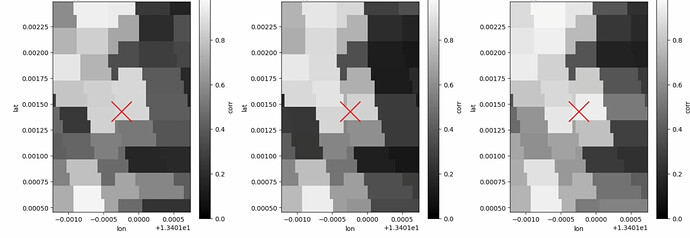

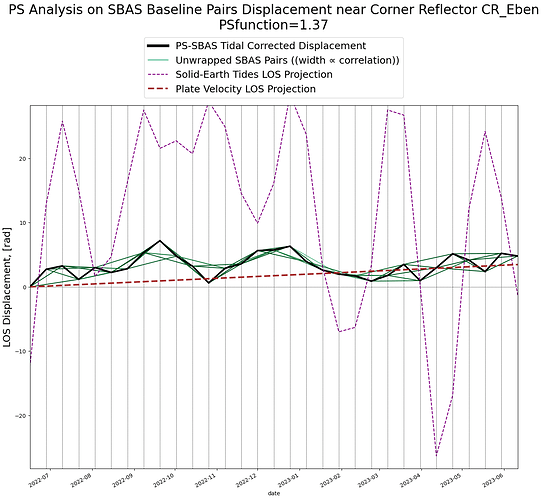

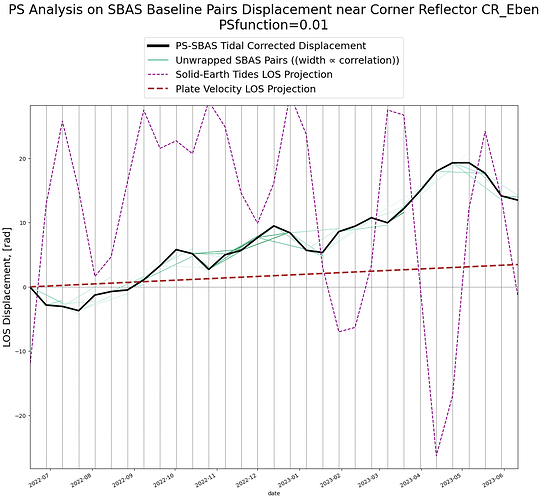

Let’s compare the line-of-sight (LOS) displacements, in radians, for two pixels. One pixel is a known corner reflector from the PyGMTSAR examples; the other is a random pixel with low stability. In this comparison we use the Persistent Scatterer (PS) function, where higher values indicate better stability, following the GMTSAR approach (the code is buried in the GMTSAR sources and is not easily accessible). This contrasts with the more widely used Amplitude Dispersion Index (ADI), where lower values are better. (If you want to investigate ADI further, we can also calculate ADI in PyGMTSAR.) Note that we normalize the average amplitudes of the Sentinel-1 scenes before the calculations.
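For readers who want to see what ADI looks like in practice, here is a minimal NumPy sketch. It uses the common definition of ADI as the temporal standard deviation of the amplitude divided by its temporal mean, and it normalizes each scene by its average amplitude first. It is not the PyGMTSAR or GMTSAR implementation; the function name `amplitude_dispersion_index` and the synthetic stack are assumptions made for illustration only.

```python
import numpy as np

def amplitude_dispersion_index(amplitudes):
    """Return ADI = std / mean per pixel for a stack shaped (n_scenes, ny, nx)."""
    amplitudes = np.asarray(amplitudes, dtype=float)
    # Normalize each scene by its average amplitude so that scene-to-scene
    # gain differences do not inflate the temporal dispersion.
    scene_means = amplitudes.mean(axis=(1, 2), keepdims=True)
    normalized = amplitudes / scene_means
    return normalized.std(axis=0) / normalized.mean(axis=0)

# Synthetic stack (hypothetical data): Rayleigh-distributed clutter plus one
# bright, stable scatterer at pixel (0, 0), with a random gain per scene.
rng = np.random.default_rng(0)
n_scenes = 30
stack = rng.rayleigh(scale=1.0, size=(n_scenes, 16, 16))
stack[:, 0, 0] = 10.0 + rng.normal(0.0, 0.2, n_scenes)
stack *= rng.uniform(0.5, 2.0, size=(n_scenes, 1, 1))

adi = amplitude_dispersion_index(stack)
print(f"stable pixel ADI:   {adi[0, 0]:.3f}")
print(f"median clutter ADI: {np.median(adi):.3f}")
```

Lower ADI means a more stable pixel, so the simulated reflector should come out well below the surrounding clutter. Because each scene is divided by its own mean, the per-scene gain applied in the simulation does not affect the result.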

For the PS pixel, all the phase pairs are consistent and there are no outliers, which means the numerical solution is accurate. For the randomly selected low-stability pixel, however, we observe phase pair inconsistencies, which can lead to inaccuracies in the unwrapped phase and displacements.

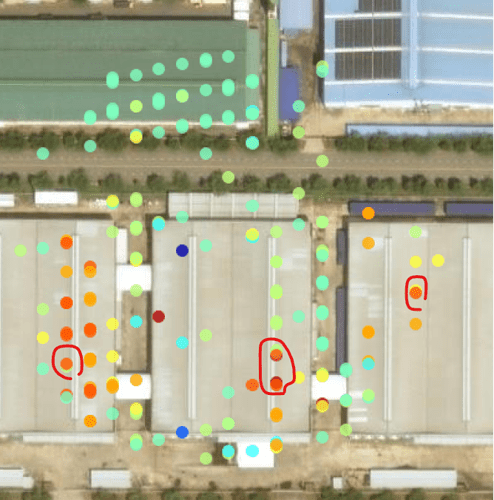

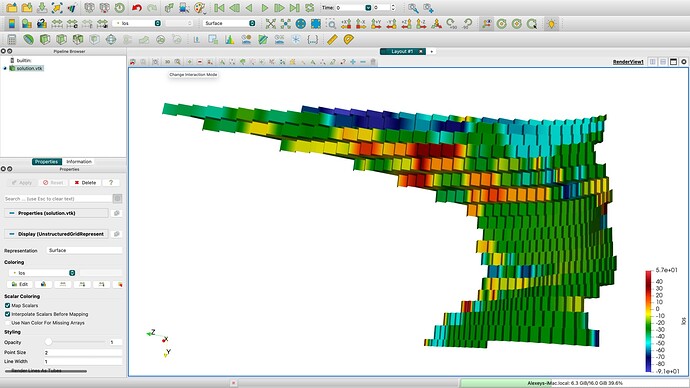
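One generic way to quantify the pair consistency described above is the closure phase: for three dates i < j < k, the wrapped sum of the pair phases i-j and j-k minus the pair phase i-k should be close to zero. The sketch below is an illustration only, not the check PyGMTSAR performs internally. The helpers `wrap`, `closure_phases`, and `simulate_pairs` are hypothetical names, and the simulation assumes independent noise on every pair (as you would see after multilooking or filtering).

```python
import itertools
import numpy as np

def wrap(phase):
    """Wrap phase values into the interval (-pi, pi]."""
    return np.angle(np.exp(1j * np.asarray(phase)))

def closure_phases(pair_phase):
    """Closure phase for every date triplet whose three pairs are all present.

    pair_phase maps (i, j) date indices with i < j to the wrapped pair phase.
    """
    dates = sorted({d for pair in pair_phase for d in pair})
    closures = []
    for i, j, k in itertools.combinations(dates, 3):
        if (i, j) in pair_phase and (j, k) in pair_phase and (i, k) in pair_phase:
            closures.append(wrap(pair_phase[i, j] + pair_phase[j, k] - pair_phase[i, k]))
    return np.array(closures)

def simulate_pairs(date_phase, noise_sigma, rng):
    """Simulate wrapped pair phases for one pixel with independent pair noise."""
    n = len(date_phase)
    return {
        (i, j): wrap(date_phase[j] - date_phase[i] + rng.normal(0.0, noise_sigma))
        for i, j in itertools.combinations(range(n), 2)
    }

def rms(values):
    """Root mean square of an array."""
    return np.sqrt(np.mean(np.square(values)))

rng = np.random.default_rng(1)
date_phase = np.cumsum(rng.normal(0.0, 0.3, 6))  # hypothetical per-date phase, radians
stable = closure_phases(simulate_pairs(date_phase, 0.05, rng))
unstable = closure_phases(simulate_pairs(date_phase, 1.0, rng))
print(f"stable pixel closure RMS:   {rms(stable):.3f} rad")
print(f"unstable pixel closure RMS: {rms(unstable):.3f} rad")
```

A pixel whose closure phases cluster near zero behaves like the corner reflector; large, scattered closure values indicate the kind of pair inconsistency that can corrupt unwrapping.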

StaMPS cannot process SBAS pairs and has no way to fix such inconsistencies, so you should select only the most stable pixels for the analysis. Technically, we can analyze almost any pixel, excluding only the extremely low-stability ones, to provide better coverage:

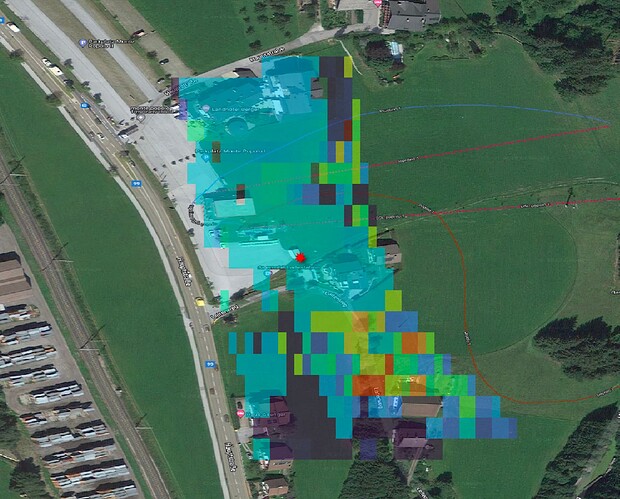

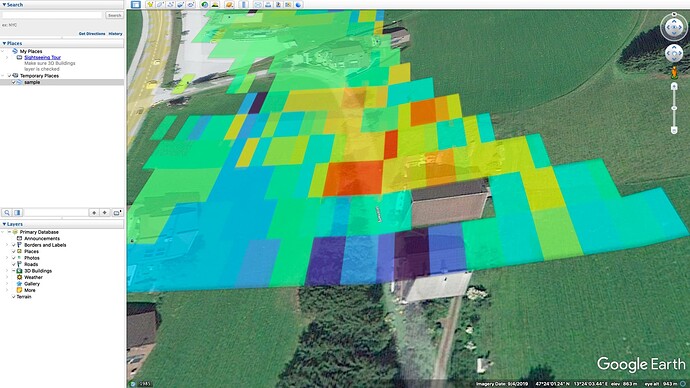

Here, we observe stable areas around the corner reflector, as well as on well-reflecting building roofs and roads. In contrast, grass-covered areas exhibit high displacements, so the expected accuracy there is lower. We can still validate the unstable pixels (those with low coherence, as determined during SBAS pair calculations) using 2D SNAPHU unwrapping and other methods (such as STL decomposition, as mentioned before). However, these validation steps are beyond the scope of the StaMPS method.
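To give a feel for time series validation, here is a simplified stand-in for STL: an ordinary least-squares fit of a linear trend plus an annual sinusoid, with the residuals used as a noise indicator. This is not STL and not the PyGMTSAR workflow. The function name `fit_trend_and_annual`, the 12-day sampling, and all synthetic values are assumptions for illustration.

```python
import numpy as np

def fit_trend_and_annual(t_years, values):
    """Least-squares fit of offset + linear trend + annual sine/cosine terms.

    Returns the coefficients [offset, slope, sin_amp, cos_amp] and the residuals.
    """
    design = np.column_stack([
        np.ones_like(t_years),
        t_years,
        np.sin(2 * np.pi * t_years),
        np.cos(2 * np.pi * t_years),
    ])
    coeffs, *_ = np.linalg.lstsq(design, values, rcond=None)
    residuals = values - design @ coeffs
    return coeffs, residuals

# Synthetic LOS series in radians (hypothetical): 3 years at 12-day sampling.
rng = np.random.default_rng(2)
t = np.arange(0.0, 3.0, 12 / 365.25)
true_slope = -0.8  # rad/year, assumed for the example
series = true_slope * t + 0.3 * np.sin(2 * np.pi * t) + rng.normal(0.0, 0.1, t.size)

coeffs, residuals = fit_trend_and_annual(t, series)
print(f"estimated slope:  {coeffs[1]:.3f} rad/year")
print(f"annual amplitude: {np.hypot(coeffs[2], coeffs[3]):.3f} rad")
print(f"residual std:     {residuals.std():.3f} rad")
```

A small residual spread relative to the fitted trend suggests the pixel's displacement is plausible. A large spread flags a pixel that needs further checks before its trend is trusted.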