Hello. This is my first time using the Sentinel Toolbox and Sentinel data.

I’m trying to get an RGB image of a city at 10 m resolution, but the result is very poor.

The resolution looks too low, and the city is washed out to nearly white.

Maybe I’m doing something wrong, so I would like to know whether I need to apply a correction to the image, or whether I am unknowingly using 15 m or 30 m data instead of the 10 m data.

I’m interested in the city of Villa Mercedes, San Luis province, Argentina.

(Here is a KMZ file with the city limits, in case it helps: límit.kmz (700 Bytes).)

I downloaded this 6 GB file because it is the most recent cloud-free product:

S2A_OPER_PRD_MSIL1C_PDMC_20160608T044243_R010_V20160607T143134_20160607T143134

The documentation I found online, as I understand it, says that this product contains Sentinel-2 data for all bands, including blue, green and red, at 10 m resolution.

I suspect that I made a mistake and used 15 m data, or downloaded the wrong file.

To generate the RGB image, I opened SNAP Desktop. Since it does not open the zip file, I uncompressed it and opened the xml file in this subdirectory:

S2A_OPER_PRD_MSIL1C_PDMC_20160608T044243_R010_V20160607T143134_20160607T143134.SAFE\GRANULE\S2A_OPER_MSI_L1C_TL_MTI__20160607T204534_A005012_T20HLG_N02.02

I got this message: “Multiple readers are available…”

-So I chose “10m resolution”, expecting the Toolbox to read the 10 m resolution data.

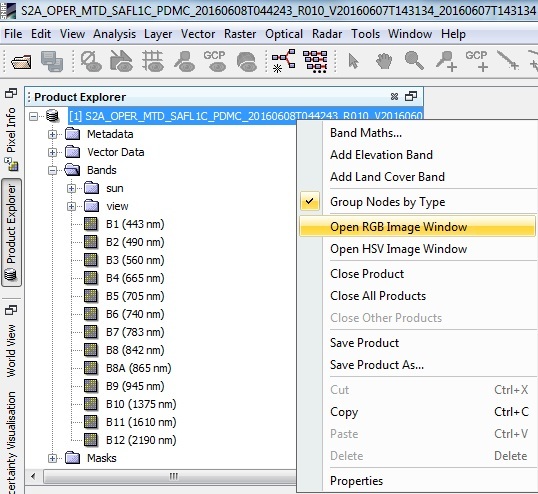

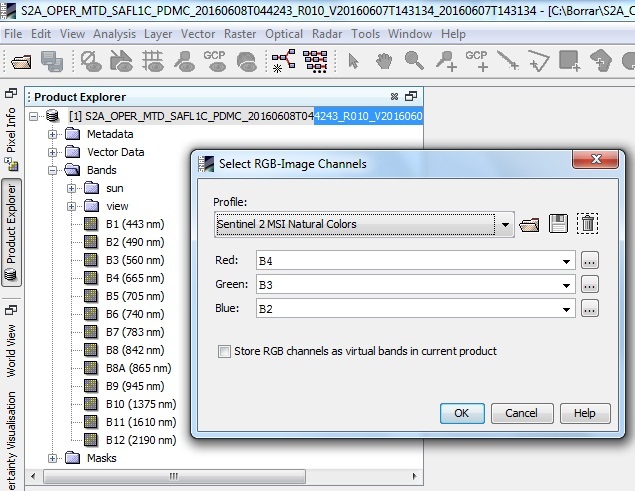

-In the Product Explorer, I right-clicked on the product and chose “Open RGB image window”. I left the default RGB channels (B2 for blue, B3 for green and B4 for red), because I had read that those are the correct ones.

The problem is that the result doesn’t look like 10 m resolution, but lower, and the city is so white that all the details are lost.

Here is a comparison of my result with an image at the same resolution from a different satellite, which is the kind of result I expected.

Maybe I should open the data with a different method?

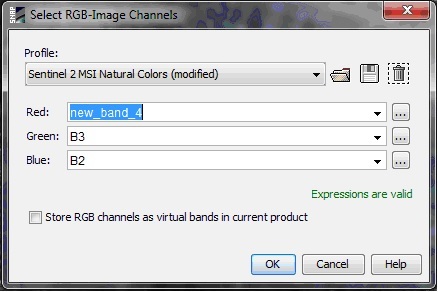

Or maybe I should do some preprocessing, calibration or adjustment first?
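
To make clear what kind of adjustment I mean: one thing I could imagine is a simple percentile contrast stretch applied to each band before display, so that a few very bright pixels do not wash out the rest. Below is a minimal sketch in plain Python, not anything taken from SNAP. The function names and the 2% / 98% cut-offs are my own assumptions, and I don't know whether this is what the Toolbox does internally.

```python
def percentile(sorted_values, pct):
    """Pick the value at the given percentile (0-100) by nearest position in a sorted list."""
    if not sorted_values:
        raise ValueError("empty band")
    idx = round((pct / 100.0) * (len(sorted_values) - 1))
    return sorted_values[idx]


def stretch_band(values, low_pct=2.0, high_pct=98.0):
    """Linearly rescale one band to 0..255, clipping values outside the chosen percentiles.

    `values` is a flat list of pixel values for a single band (hypothetical input;
    the 2/98 defaults are an assumption, not a SNAP setting).
    """
    ordered = sorted(values)
    lo = percentile(ordered, low_pct)
    hi = percentile(ordered, high_pct)
    if hi == lo:
        # Constant band: nothing to stretch.
        return [0 for _ in values]
    out = []
    for v in values:
        scaled = (v - lo) / (hi - lo)
        scaled = min(1.0, max(0.0, scaled))  # clip outliers to the display range
        out.append(int(round(scaled * 255)))
    return out


if __name__ == "__main__":
    # Toy band: mostly moderate values plus one very bright outlier (e.g. a white roof).
    band = list(range(100)) + [10000]
    print(stretch_band(band)[:5], stretch_band(band)[-1])
```

The same function would be applied separately to B4, B3 and B2 before combining them into the red, green and blue channels.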