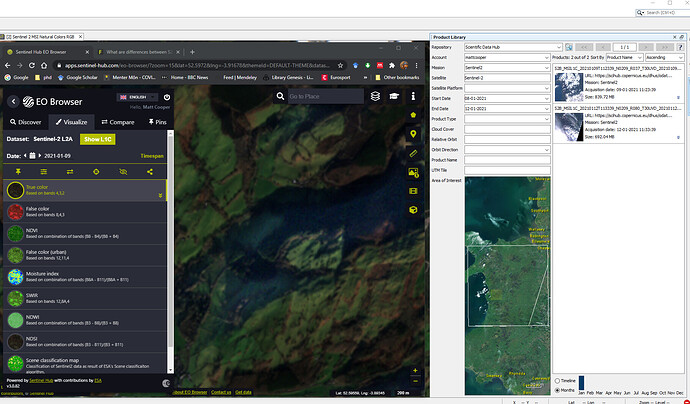

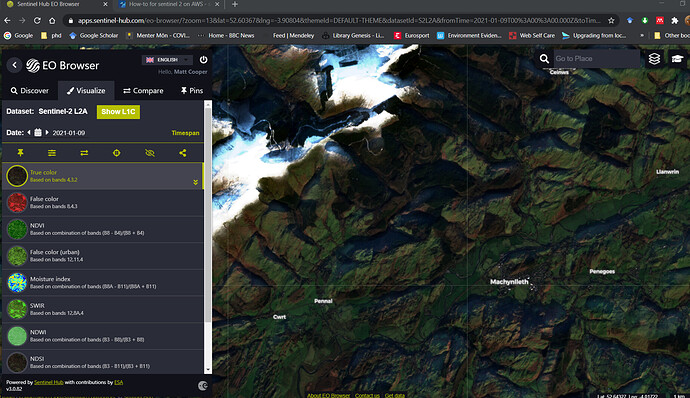

I’m using EO Browser to find suitable images of my AOI, but I can’t find the corresponding image in the SNAP Product Library.

I’ve attached a screenshot which illustrates this.

Am I doing something wrong, or is the image not uploaded into the product library yet?

Not sure how that would help: my AOI is much larger than the area I’m actually studying, and EO Browser shows an image of my AOI, but I can’t find it in the Product Library. (BTW, the AOI you used is miles away from mine!)

I am not sure how EO Browser works.

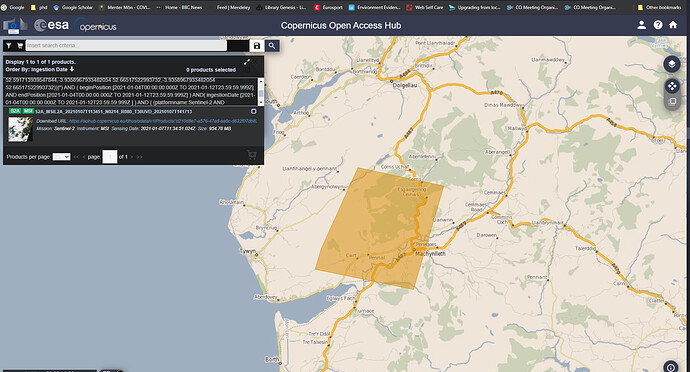

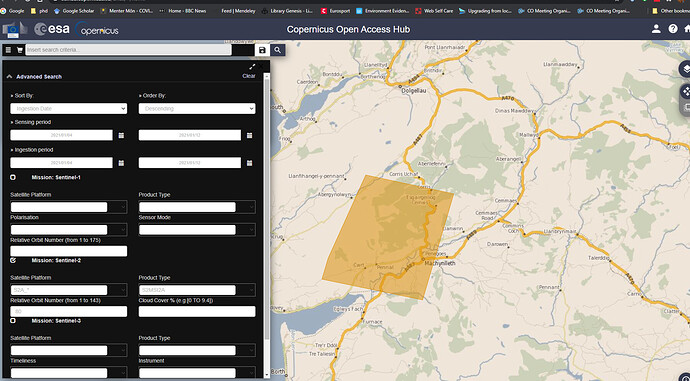

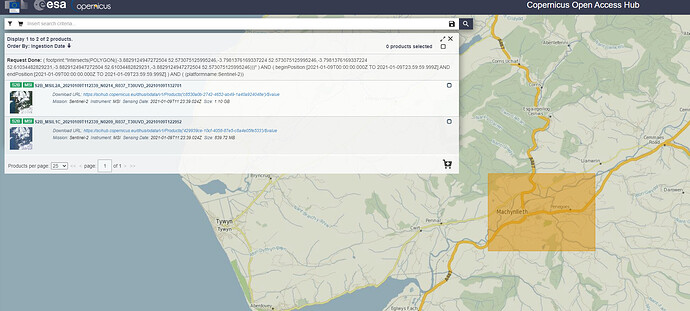

I use Copernicus Open Access Hub (https://scihub.copernicus.eu/dhus/#/home)

Can you see there, for example, the product name covering that area? It will make things easier.

No, I’ve searched on the Open Access Hub, and that image isn’t there!

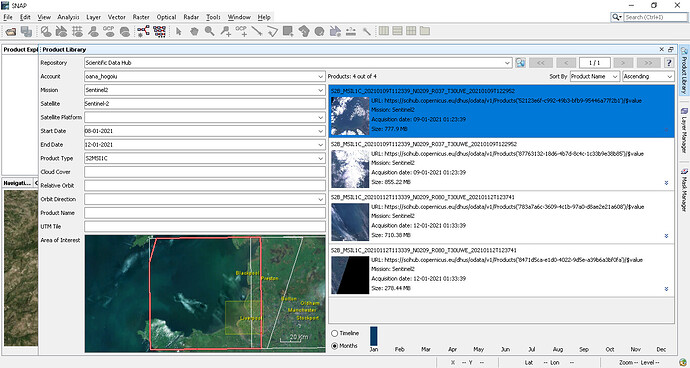

I searched from 04/01 to 12/10; here is my search profile:

But, as I said, EO Browser clearly shows an image for 09/01. It’s obviously a good cloud-free image, so I could really use it!

Hello Matt,

I found your products. The problem with your search was that you had also restricted the ingestion date.
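If you search the hub programmatically rather than through the web page, the same point applies: constrain the sensing time and leave the ingestion date open. The minimal sketch below only builds the search URL (it does not send a request). It assumes the DHuS OpenSearch keywords `platformname`, `producttype`, `beginposition` and `footprint`, and the `S2MSI2A` product type for Level-2A; the function name `build_query` and the example polygon are hypothetical.

```python
from urllib.parse import urlencode

# Assumed DHuS OpenSearch endpoint of the Copernicus Open Access Hub.
SEARCH_URL = "https://scihub.copernicus.eu/dhus/search"


def build_query(footprint_wkt: str, sensing_start: str, sensing_end: str,
                product_type: str = "S2MSI2A") -> str:
    """Return a search URL filtered on sensing time (beginposition) only.

    The ingestion date is deliberately left unconstrained, so products that
    were ingested later than they were acquired are not excluded.
    """
    clauses = [
        "platformname:Sentinel-2",
        f"producttype:{product_type}",
        f"beginposition:[{sensing_start} TO {sensing_end}]",
        f'footprint:"Intersects({footprint_wkt})"',
    ]
    return SEARCH_URL + "?" + urlencode({"q": " AND ".join(clauses), "rows": 100})


# Hypothetical small polygon (lon lat pairs) around the pin used later in this thread.
aoi = "POLYGON((-3.7 53.1,-3.5 53.1,-3.5 53.25,-3.7 53.25,-3.7 53.1))"
print(build_query(aoi, "2021-01-01T00:00:00.000Z", "2021-01-31T23:59:59.999Z"))
```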

Matt, in the Product Library you should use the ProductType filter to see either the L1C or the L2A products. You cannot see both at the same time. L1C is the default, even if you unselect it (I’ve just noticed this in your screenshot).

Thank you @marpet, I was looking for the S2A image, which is on relative orbit 80, not the S2B image, which is on orbit 37. I think that is where the error was.
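Because S2A and S2B cover the same tile on different relative orbits, it can help to read the mission and orbit straight out of the product name. A minimal sketch, assuming the compact Sentinel-2 naming convention (`MMM_MSIXXX_YYYYMMDDTHHMMSS_Nxxyy_ROOO_Txxxxx_<discriminator>`); `parse_product_name` and `S2Product` are hypothetical helpers, not part of SNAP. The two example names are the ones appearing later in this thread.

```python
import re
from dataclasses import dataclass

# Compact Sentinel-2 naming convention (assumed):
# MISSION_MSILEVEL_SENSINGTIME_NBASELINE_RORBIT_TTILE_DISCRIMINATOR
_NAME = re.compile(
    r"^(?P<mission>S2[A-Z])_MSI(?P<level>L1C|L2A)_"
    r"(?P<sensing>\d{8}T\d{6})_N(?P<baseline>\d{4})_"
    r"R(?P<orbit>\d{3})_T(?P<tile>[0-9A-Z]{5})_\d{8}T\d{6}"
)


@dataclass
class S2Product:
    mission: str         # e.g. S2A or S2B
    level: str           # L1C or L2A
    sensing_date: str    # YYYYMMDD of the acquisition
    baseline: str        # processing baseline, e.g. 0214
    relative_orbit: int  # relative orbit number
    tile: str            # MGRS tile ID


def parse_product_name(name: str) -> S2Product:
    """Split a product name; trailing suffixes such as '.SAFE' or '_ndvi' are ignored."""
    m = _NAME.match(name)
    if m is None:
        raise ValueError(f"not a compact Sentinel-2 product name: {name}")
    return S2Product(
        mission=m["mission"],
        level=m["level"],
        sensing_date=m["sensing"][:8],
        baseline=m["baseline"],
        relative_orbit=int(m["orbit"]),
        tile=m["tile"],
    )


for name in ("S2A_MSIL2A_20200919T113321_N0214_R080_T30UVD_20200919T142636",
             "S2B_MSIL2A_20210109T112339_N0214_R037_T30UVD_20210109T132701"):
    p = parse_product_name(name)
    print(p.mission, p.sensing_date, "orbit", p.relative_orbit, "tile", p.tile)
```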

@oana_hogoiu @marpet

Thanks for your help with this, although I’m still not 100% sure about the results!

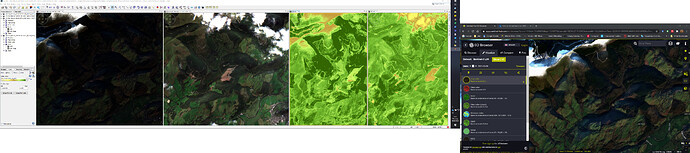

I’ve now got the scene I wanted (S2B_MSIL2A_20210109), but it seems very dark compared with both S2A_MSIL2A_20200919 (which I know is from September 2020) and the image shown in EO Browser (which doesn’t say whether it’s S2A or S2B).

I’ve added a screenshot of all three images below.

One thing I don’t understand is the NDVI values. In the SNAP portion of the screenshot, the NDVI image with the highest values is the left-hand one, i.e. the S2B January image.

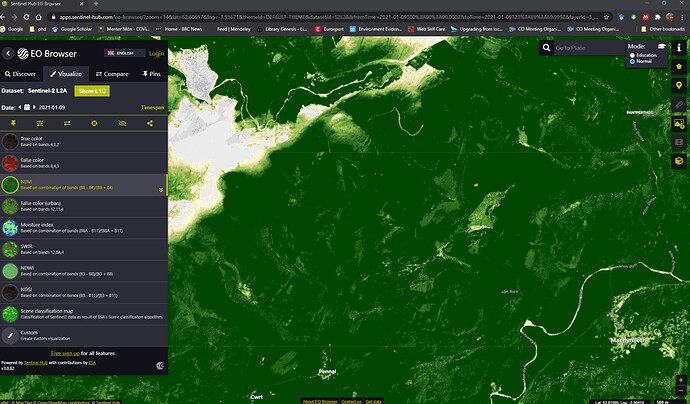

Also, when I set EO Browser to NDVI on the January image, the picture is very different!

Are there any NDVI specialists who read this forum?

Perhaps you want to ask about that in the S2TBX category… this may get hidden in here.

Please note that, as I tried to explain, some people take Sentinel-2 data and transform it when they move it to other locations and platforms. The appearance of the data also depends on the processing level (Level-1 vs Level-2) and on any other corrections that may have been applied.

Someone following S2 may know about these differences, but my guess is that EO Browser is either applying some sort of extra radiometric correction or perhaps showing a different product from the one you think it is.

@Jan, I believe you are linked to the S2 MPC. Is there any feedback you can quickly offer here? Thanks.

Hi @mattcooper @cristianoLopes

Yes; I’m linked to the S2 Mission Performance Centre. We monitor the output of the S2 Mission and investigate anomalies and support validation of the processors. As such, I cannot comment on the representation of imagery in Sentinel Hub; as Cristiano says, there may be some unknown aspect to the portrayal of the data in the GUI. @mattcooper you may want to ask on their forum https://forum.sentinel-hub.com/.

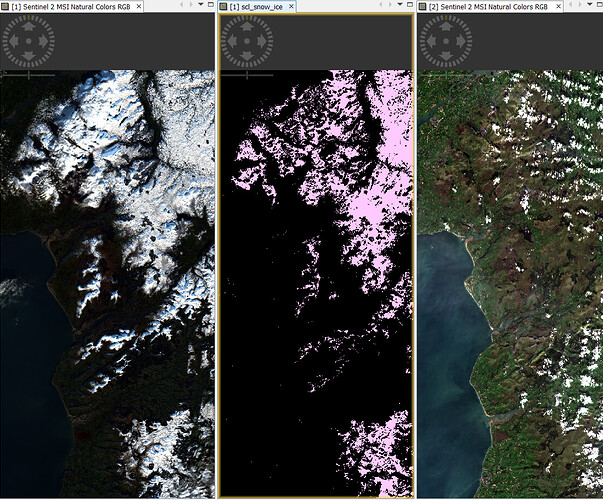

The darkness of the L2A image from 9 January 2021 is due to the presence of snow in the image. The snow is so bright that, when the image is rendered, everything around it appears dark. This can be seen in a side-by-side comparison of both acquisitions in SNAP:

…with the Snow/Ice Scene Classification Layer (SCL) opened in between them. It must be stressed that this is NOT a quality issue, but a consequence of rendering the final image in SNAP. It can be ameliorated by performing a ‘stretch’ using the Color Manipulation sliders:
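The effect of the snow on the display can be reproduced with a simple linear stretch. The sketch below is not SNAP’s code: it assumes reflectance already scaled to 0 to 1 and uses synthetic numbers (not taken from either scene) to show why a stretch whose upper limit is set by the snow leaves the rest of the image dark, while clipping the limits at percentiles brightens it. `linear_stretch` and `percentile_stretch` are hypothetical names.

```python
import numpy as np


def linear_stretch(band: np.ndarray, lo: float, hi: float) -> np.ndarray:
    """Map [lo, hi] linearly to 0..255, clipping values outside the range."""
    if hi <= lo:
        raise ValueError("upper limit must be greater than lower limit")
    scaled = (band - lo) / (hi - lo)
    return np.round(np.clip(scaled, 0.0, 1.0) * 255).astype(np.uint8)


def percentile_stretch(band: np.ndarray, low_pct: float = 2.0,
                       high_pct: float = 98.0) -> np.ndarray:
    """Pick the limits from percentiles so a few very bright pixels get clipped."""
    lo, hi = np.percentile(band, [low_pct, high_pct])
    return linear_stretch(band, lo, hi)


# Synthetic scene: 99 darker land pixels plus one very bright snow pixel.
land = np.linspace(0.03, 0.08, 99)
scene = np.append(land, 0.9)

min_max = linear_stretch(scene, scene.min(), scene.max())
clipped = percentile_stretch(scene)
print("brightest land pixel, min-max stretch:", min_max[:99].max())
print("brightest land pixel, 2-98 % stretch:", clipped[:99].max())
```

Only the displayed values change; the `scene` array itself is never modified, which mirrors the point that a stretch is a rendering choice rather than a change to the data.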

Just as an aside, in order to keep the User Community informed of the behaviour of the instrument, the S2 MPC produce a Monthly Data Quality Report on aspects of the L1C and L2A data acquired by S2A and S2B. They are held in the Sentinel Online User Guide Document Library at https://sentinel.esa.int/web/sentinel/user-guides/sentinel-2-msi/document-library

Cheers

Jan

S2 MPC Operations Manager

To make RGB image creation constant and independent of the data it contains, you can have a look at my post here:

[Update] I just added an entry to our FAQ:

Why are RGB images differently colorised and not comparable - Collection of FAQs
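One way to make RGB composites comparable between acquisitions is to use fixed display limits instead of limits derived from each scene’s histogram. A minimal sketch, assuming reflectance scaled to 0 to 1; the range 0.0 to 0.3 is an arbitrary, hypothetical choice for illustration and is not taken from the linked post or FAQ, and `rgb_fixed` is a hypothetical name.

```python
import numpy as np

# Hypothetical fixed display range in reflectance units, shared by all scenes.
VMIN, VMAX = 0.0, 0.3


def rgb_fixed(red, green, blue, vmin=VMIN, vmax=VMAX):
    """Stack three bands into an 8-bit RGB array using the same limits for every scene."""
    stack = np.stack([red, green, blue], axis=-1).astype(np.float64)
    scaled = (stack - vmin) / (vmax - vmin)
    return np.round(np.clip(scaled, 0.0, 1.0) * 255).astype(np.uint8)


# The same land reflectance (0.15) in two scenes, one of which also contains snow (0.9).
scene_a = np.array([0.15, 0.05])
scene_b = np.array([0.15, 0.90])
rgb_a = rgb_fixed(scene_a, scene_a, scene_a)
rgb_b = rgb_fixed(scene_b, scene_b, scene_b)
print(rgb_a[0], rgb_b[0])  # identical: the snow in scene_b does not change the mapping
```

The trade-off is that very bright surfaces such as snow saturate at 255, but pixels with the same reflectance get the same colour in every scene.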

@Jan thank you for that reply, it explains the difference perfectly. Will the increased reflectance of the snow also affect the band 8 values, and hence the NDVI processor in SNAP?

@marpet Thanks for your reply, using the profile you linked to certainly gives a better view of the scene!

Unfortunately, it has created another problem which I don’t understand!

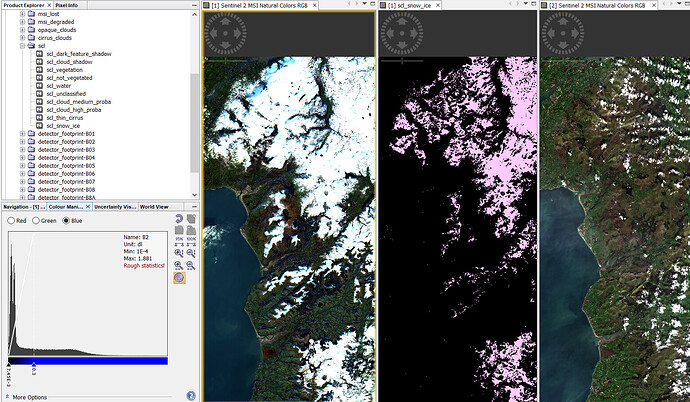

I created a subset of the S2B image and opened it with the natural colours profile, and then opened it with the profile you provided.

When both are open together (as well as the original full-tile image) and the ‘synchronise view across image windows’ toggle is selected, the two subsets appear to be in different places! See screenshot:

The same thing happens with other subsets. Does using a different colour profile affect the geolocation in any way?

The view synchronisation is a known problem.

In some scenarios it is not working well.

For example, as described in this issue: [SNAP-734] View synchronisation is not working properly with a product in lat-long WGS84 and other in UTM

Your problem is not the same, but related.

For the time being, just use cursor synchronisation; it works more often. Then manually zoom to roughly the same scale.

This is only a matter of color scaling, i.e. which brightness is used to display each pixel value. Changes in the Color Manipulation tab (and its automated presets) do not affect the actual reflectance values.

Your screenshot gives me screen-envy

@mengdahl this one has the laptop too

To expand on the brightness issue: besides the histogram stretching that SNAP does (which, IIRC, is quite annoying for RGB images compared with e.g. QGIS), these images will never look “pretty” on a screen, because:

- reflectance values are linear, while computer displays have a non-linear, approximately power-law (gamma) response (see sRGB)

- the Sentinel-2 dynamic range is around 12-14 bits, while computer displays are usually 8-bit; reducing the bit depth while maintaining a good contrast is a relatively hard problem

Generally, without gamma correction (not to mention tone mapping), these images will look too dark on a screen. This has no impact on your NDVI processing, as @ABraun has already said.
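The gamma point can be illustrated with the standard sRGB encoding function (IEC 61966-2-1), which converts linear values into display values. A minimal sketch, assuming reflectance already scaled to 0 to 1 and treating it directly as linear display intensity, which is a simplification; `srgb_encode` and `to_8bit` are hypothetical names and the reflectance values are illustrative only.

```python
import numpy as np


def srgb_encode(linear):
    """Apply the sRGB transfer function to linear values in 0..1."""
    x = np.clip(np.asarray(linear, dtype=np.float64), 0.0, 1.0)
    return np.where(x <= 0.0031308,
                    12.92 * x,
                    1.055 * np.power(x, 1.0 / 2.4) - 0.055)


def to_8bit(values):
    """Quantise 0..1 display values to the 8-bit range of a typical screen."""
    return np.round(np.asarray(values) * 255).astype(np.uint8)


reflectance = np.array([0.02, 0.05, 0.10, 0.30])  # illustrative values only
print("linear:", to_8bit(reflectance))
print("sRGB  :", to_8bit(srgb_encode(reflectance)))
```

Typical land reflectances end up in the bottom third of the 8-bit range when sent to the screen linearly, which is why the uncorrected composite looks dark.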

AFAIK, EO Browser does some magic to make its true-color composites look nicer.

Even the examples there aren’t necessarily “beautiful”, because they rely on global tone mapping operators (which apply the same parameters to every pixel). To get good detail in both shadows and highlights you need a local operator, which is incidentally how the human retina and brain work.
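For reference, this is what a global operator looks like: one curve applied to every pixel, regardless of its neighbourhood. The sketch uses the simple global operator from Reinhard et al. (2002), chosen here only as a familiar example; it is not what EO Browser or SNAP uses, `reinhard_global` is a hypothetical name, and `key=0.18` is the conventional default rather than anything tuned for Sentinel-2.

```python
import numpy as np


def reinhard_global(values: np.ndarray, key: float = 0.18) -> np.ndarray:
    """Global tone mapping: scale by the log-average, then compress with L / (1 + L)."""
    eps = 1e-6  # avoids log(0) for zero-valued pixels
    log_average = np.exp(np.mean(np.log(values + eps)))
    scaled = key * values / log_average
    return scaled / (1.0 + scaled)


reflectance = np.array([0.02, 0.05, 0.10, 0.90])  # illustrative: land plus one snow pixel
print(np.round(reinhard_global(reflectance), 3))
```

Because the curve is the same everywhere, a pixel’s output depends only on its own value and one scene-wide statistic; a local operator would instead adapt the curve to each neighbourhood.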

Hello @mattcooper

Yes.

The NDVI is a normalised band ratio that uses B04 (visible red) and B08 (near-infrared, NIR):

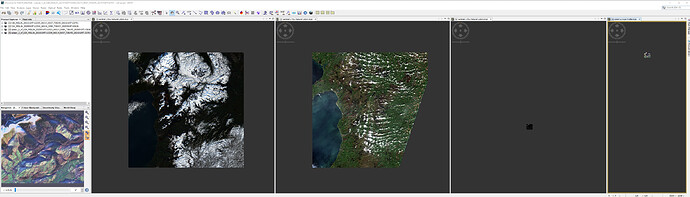

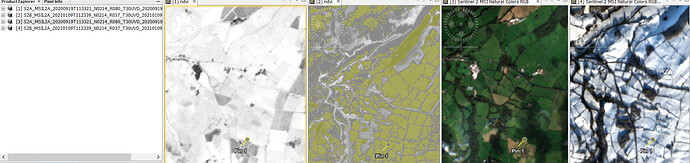
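Written out in terms of the two Sentinel-2 bands, the standard NDVI definition is:

$$
\mathrm{NDVI} = \frac{B08 - B04}{B08 + B04}
$$

Snow reflects strongly in both the red and the near-infrared, so over snow the numerator is close to zero while the denominator is large, and NDVI falls to around zero or slightly below.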

Thus, any significant change in B08 will affect the final result. This can be seen in a comparison of the two acquisitions:

In the image, the two left-hand images are the NDVI outputs from SNAP and the two right-hand images are the RGB composites. You can see that the NDVI of the 20210109 image has the SNAP flag “NDVI value is too low” switched ON, whereas this flag is not raised in the 20200919 image.
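The same calculation is easy to reproduce outside SNAP, which can help when checking individual pixels. A minimal NumPy sketch, assuming `b04` and `b08` hold L2A reflectance; `ndvi` is a hypothetical helper and the sample values are illustrative, not read from either scene. Since NDVI is a ratio, it is unchanged if both bands carry the same scale factor (e.g. integer-coded reflectance), provided there is no additive offset.

```python
import numpy as np


def ndvi(b04, b08):
    """NDVI = (B08 - B04) / (B08 + B04); pixels where both bands are 0 become NaN."""
    red = np.asarray(b04, dtype=np.float64)
    nir = np.asarray(b08, dtype=np.float64)
    denom = nir + red
    out = np.full(np.broadcast(red, nir).shape, np.nan)
    np.divide(nir - red, denom, out=out, where=denom != 0)
    return out


# Illustrative reflectances: vegetation (low red, high NIR) and snow (high in both).
red = np.array([0.04, 0.80])
nir = np.array([0.30, 0.78])
print(ndvi(red, nir))
```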

This can be corroborated by extracting a pin sample from the 20210109 image and comparing it with the same pin in the 20200919 image in Excel:

| Name | Lon (°) | Lat (°) | ndvi |
|---|---|---|---|
| S2A_MSIL2A_20200919T113321_N0214_R080_T30UVD_20200919T142636_ndvi | -3.579447489 | 53.16870849 | 0.6491547 |
| S2B_MSIL2A_20210109T112339_N0214_R037_T30UVD_20210109T132701_ndvi | -3.579447489 | 53.16870849 | 0.007868922 |
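The size of the drop can be computed directly from the two pin values in the table. A trivial sketch: the dictionary simply copies the table rows, and the acquisition date is read from the third underscore-separated field of each product name.

```python
# NDVI at the same pin (lon -3.579447489, lat 53.16870849), copied from the table.
pins = {
    "S2A_MSIL2A_20200919T113321_N0214_R080_T30UVD_20200919T142636_ndvi": 0.6491547,
    "S2B_MSIL2A_20210109T112339_N0214_R037_T30UVD_20210109T132701_ndvi": 0.007868922,
}

for name, value in pins.items():
    sensing_date = name.split("_")[2][:8]  # YYYYMMDD from the sensing-time field
    print(sensing_date, value)

september, january = pins.values()
print(f"NDVI drop: {september - january:.3f}")
```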

Cheers

Jan