There are several approaches; which one fits depends on your application.

If you want to classify the image based on both S1 and S2, you can directly digitize training areas on the stack. The Random Forest classifier, for example, then uses information from both sensors.

If you really want to merge the images, you can apply a PCA, which generates new layers based on information from both sensors.
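To illustrate what such a PCA does with a stack, here is a minimal sketch in Python with NumPy. It is not SNAP's implementation: the function name `pca_fuse`, the band counts, and the random data are assumptions made up for the example. Bands are standardized first because backscatter and reflectance have very different value ranges.

```python
import numpy as np

def pca_fuse(stack, n_components):
    """Project a (bands, rows, cols) stack onto its first principal components.

    Returns the component images and the fraction of variance each explains.
    """
    bands, rows, cols = stack.shape
    # One row per pixel, one column per band.
    pixels = stack.reshape(bands, -1).T.astype(float)
    # Standardize so that bands with large value ranges do not dominate.
    pixels = (pixels - pixels.mean(axis=0)) / pixels.std(axis=0)
    cov = np.cov(pixels, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)
    # eigh returns ascending eigenvalues; sort descending.
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = pixels @ eigvecs[:, :n_components]
    explained = eigvals[:n_components] / eigvals.sum()
    return scores.T.reshape(n_components, rows, cols), explained

# Synthetic stand-ins for a collocated product: 2 S1 bands and 4 S2 bands.
rng = np.random.default_rng(0)
s1 = rng.normal(size=(2, 50, 50))
s2 = rng.normal(size=(4, 50, 50))
stack = np.concatenate([s1, s2], axis=0)

components, explained = pca_fuse(stack, 3)
print(components.shape, explained)
```

Each output layer is a weighted mix of all input bands, so the first components carry information from both sensors at once.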

There are also various other fusion techniques, feel free to research and compare them.

Sir

Actually, I will fuse S1 and S2 and then use RF classification in SNAP. I am a little confused: after the collocation process, should PCA be used or something else? Please clarify.

Come on… How can I know what you are aiming at? We are just trying to help, but there are so many fusion techniques, there is no standard procedure, and there are many things which have to be considered. So how can we advise you if you obviously don’t know what you want yourself?

If you want sound advice, give a bit more information yourself. What is your data, what do you want to do with it, which method did you choose?

Just heading from one step to the next makes it so difficult for us to understand what is going on at your side.

Dear sir

I have Sentinel-1A and Sentinel-2B data. I want to do LU/LC classification in SNAP using RF classification, so I want to use a fusion technique for more accurate classification. If more clarification is required, please let me know.

In this case you can simply train your samples on the stack which contains both S1 and S2 data and then apply the Random Forest classifier.
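A minimal sketch of this idea outside SNAP, using scikit-learn's `RandomForestClassifier` as a stand-in for SNAP's Random Forest tool. All data here is synthetic, and the band counts, value ranges, and the random sample of pixels that replaces digitized training polygons are assumptions for illustration only.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(42)
rows, cols = 40, 40

# Synthetic ground truth: class 0 on the left half, class 1 on the right.
labels_true = np.zeros((rows, cols), dtype=int)
labels_true[:, cols // 2:] = 1

# Two fake S1 bands (backscatter in dB) and four fake S2 bands (reflectance).
s1 = np.stack([labels_true * 5.0 - 15.0 + rng.normal(0, 1.0, (rows, cols))
               for _ in range(2)])
s2 = np.stack([labels_true * 0.2 + 0.1 + rng.normal(0, 0.03, (rows, cols))
               for _ in range(4)])

# The "stack": every pixel gets one feature vector with values of both sensors.
stack = np.concatenate([s1, s2], axis=0)            # (6, rows, cols)
features = stack.reshape(stack.shape[0], -1).T      # (pixels, 6)
targets = labels_true.ravel()

# A random subset of pixels stands in for the digitized training areas.
train_idx = rng.choice(features.shape[0], size=200, replace=False)

clf = RandomForestClassifier(n_estimators=100, random_state=0)
clf.fit(features[train_idx], targets[train_idx])

class_map = clf.predict(features).reshape(rows, cols)
accuracy = (class_map == labels_true).mean()
importances = clf.feature_importances_  # one value per band, S1 first
print(accuracy, importances)
```

No explicit fusion step is involved: the classifier simply sees S1 and S2 bands side by side, and the feature importances give a rough indication of which bands it relied on.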

You will find descriptions here:

Once S1 images are terrain corrected, re-projected in UTM (as S2) and stacked together with S2, I guess the geo-location accuracy of overlapping S1/S2 pixels in the stack is defined by the orthorectification algorithm.

Which level/order of accuracy can we expect in terms of pixel alignment?

From my understanding, it should be sub-pixel order. Any comments on that?

M

Hello ABraun, I was wondering if it is important to preprocess the Sentinel-2 data, because I tried classifying it and the result wasn’t good… in fact, it didn’t work.

I don’t know whether it is because I didn’t preprocess it or because I left something out, but I followed your discussion with dini_ramanda and did exactly as he/she did.

Hello dini_ramanda, how did you go about your classification of the Sentinel-2 dataset? I am getting something strange, but Sentinel-1 works perfectly.

Radiometric correction only slightly changes the pixel values; it is not mandatory for classification (unless you want to compare scenes of different dates).

But there were cases where classification only worked after reprojection.

Hello, I am doing S1 and S2 fusion. As S1 preprocessing I did calibration, speckle filtering, and terrain correction in SNAP. I did nothing to the S2 data.

For the fusion I then need to co-register both datasets to the same coordinate reference system. I need detailed steps on how to do the coregistration, including the coordinate reference settings.

And what are the next steps for the fusion? I need help.

If you’ve terrain corrected the S1 products, just use Collocate or CreateStack on the S1 and S2 products.

Hello, thanks for your suggestion.

I have now run Collocate from the SNAP Raster > Geometric menu.

Are collocation, create stack, and coregistration the same thing?

What do I do next for the fusion of SAR and optical data (PCA, IHS, Brovey etc.), and is it possible in SNAP or do I have to export to ERDAS?

Kindly guide me, I am new to remote sensing.

If you are new to the field, take your time to study and compare some opinions and approaches. There is no standard way of fusion, so it also depends on what you want to do with the data after the fusion. I listed some references to fusion approaches here: Fusion of S-1A and S-2A data

It might be suitable to convert the SAR data to dB (also by right-clicking on the SAR bands) because this creates a more suitable distribution of backscatter values. Explanations are given here: dB or DN for image processing? and here: Classification Sentinel-1 problems with MaxVer
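For reference, the conversion itself is just 10 · log10 of the linear backscatter. A small Python sketch (the function name `to_db` and the `floor` guard against zero values are assumptions, not SNAP's code):

```python
import math

def to_db(linear, floor=1e-10):
    """Convert linear backscatter (e.g. Sigma0) to decibels.

    Values at or below zero are clamped to `floor` to avoid log10 errors.
    """
    return 10.0 * math.log10(max(linear, floor))

# Linear values spanning three orders of magnitude...
sigma0 = [0.001, 0.01, 0.1, 1.0]
# ...become evenly spaced in dB (about -30, -20, -10 and 0).
sigma0_db = [to_db(v) for v in sigma0]
print(sigma0_db)
```

This is why the dB histogram looks closer to a normal distribution: the long tail of high linear values is compressed, while differences among low values are stretched.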

Once they are in your stack, you can create an RGB image by right-clicking on the product and selecting “Open RGB image window”. This lets you assign colors to different bands and shows you their different information content.

Some fusion methods can also be done in the Band Maths tool (right-click > Band Maths).
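As one example of a band-arithmetic fusion, the Brovey transform mentioned earlier in the thread scales each optical band by its share of the band sum and multiplies it by an intensity layer. The sketch below uses NumPy; treating a SAR backscatter band as the intensity layer is an assumption for illustration, and the values are made up.

```python
import numpy as np

def brovey(red, green, blue, intensity, eps=1e-12):
    """Brovey transform: band / (red + green + blue) * intensity.

    `eps` avoids division by zero where all three bands are 0.
    """
    total = red + green + blue + eps
    return tuple(band / total * intensity for band in (red, green, blue))

# Tiny made-up example: three optical bands and one intensity layer.
red = np.array([[0.2, 0.1]])
green = np.array([[0.3, 0.1]])
blue = np.array([[0.5, 0.2]])
intensity = np.array([[2.0, 4.0]])  # stand-in for a scaled SAR band

fused_r, fused_g, fused_b = brovey(red, green, blue, intensity)
print(fused_r, fused_g, fused_b)
```

The same per-pixel formula can be typed into a band maths expression, one output band at a time, with the actual band names of your stack in place of the variable names used here.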

PCA is available under Raster > Image Analysis > Principal Component Analysis.

Unsupervised clustering may also be an option for you (Raster > Classification > Unsupervised Classification).

Hi everyone, can someone please explain whether there is a difference between stacking images and fusing them? I see some recommend that image fusion be done through transformation techniques like PCA. I also want to use S1 and S2 as input variables in RF classification.

I applied all the S2 and S1 preprocessing steps you have mentioned above in SNAP. I converted my SAR files to decibels and saved them as bands. But I don’t know how to mosaic or how to create a subset of an image, as the SNAP subsetting tool does not allow the use of ROI or SHP files, and my study area has an irregular shape. So I performed image mosaicking and raster clipping in QGIS. I then used the “align rasters” function to resample them to 20 m resolution and to register them to a common projection, WGS84 Zone 35.

I then created an 18-band stack consisting of 10 selected multi-temporal S2 bands and 8 SAR bands spanning a four-month period (VH and VV for 4 months = 8). Now I am trying to extract reflectance values into a data frame in RStudio, but the process runs forever; sometimes the system just crashes and restarts the computer. I want to perform a classification after extracting the reflectance/backscatter values (remember my file is an 18-band stack).

Please help: what am I doing wrong? And please do not forget to answer my first question. Thank you in advance.

Hello, can we do object detection, such as roads, buildings, bridges etc., from fused data?

If yes, then how? Please share some related material on doing this.

The only object detection currently supported by SNAP is the ship detection module based on the CFAR algorithm (examples).

For more complex objects, such as buildings or roads, you will have to pursue a semantic and object-oriented approach, for example in eCognition.

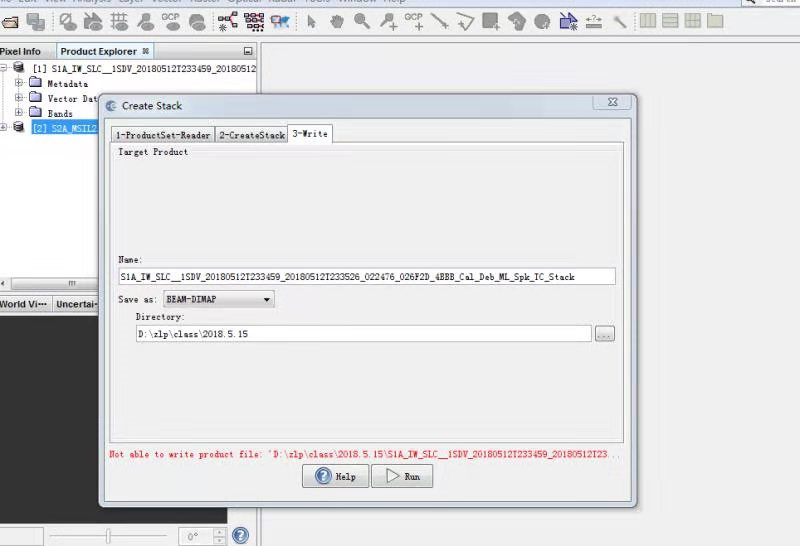

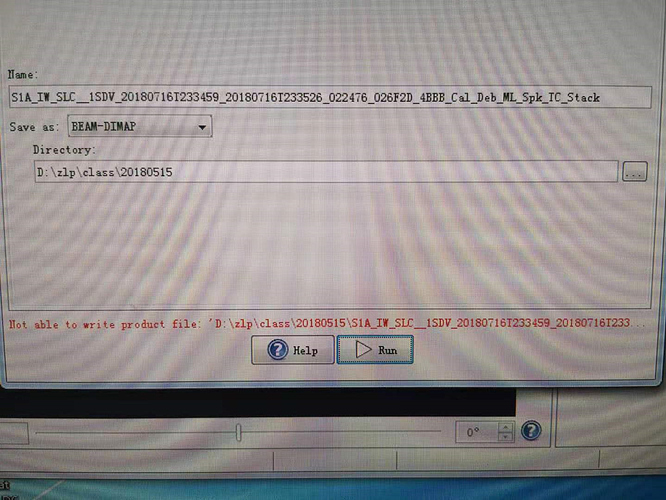

When I use the stack tool to create a stack from the S1A and S2A products, the following error is shown. Please help me.

Try to write it to a folder with no . in the name (e.g. D:\zip\class\20180515).

Maybe your D:\ drive is full?