Dear SNAP community,

As I couldn’t find a single, exhaustive source of documentation on SNAP/gpt performance settings, I’m posting my questions here. I’d be very grateful if the developers or a power user could answer. Maybe this will also help to improve the SNAP documentation.

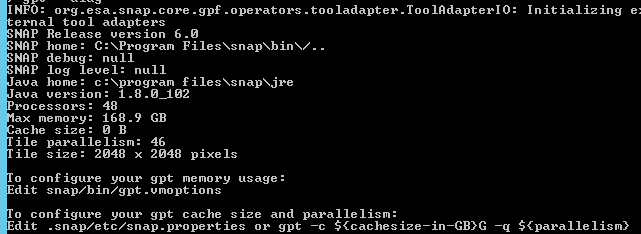

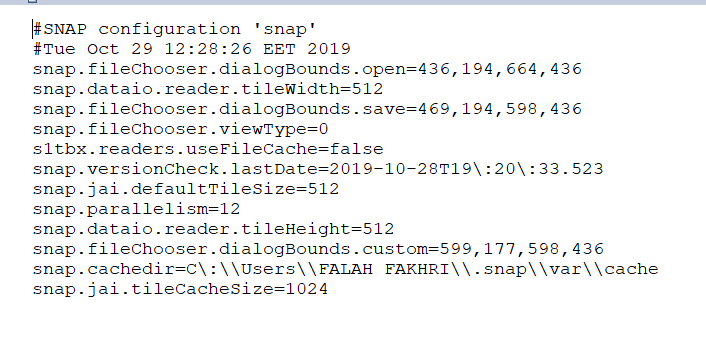

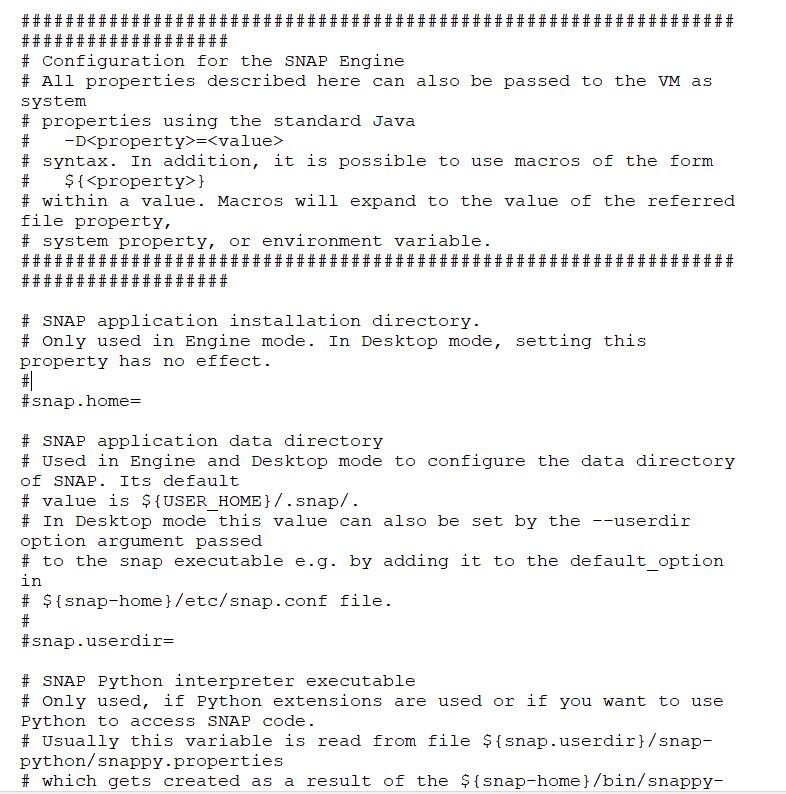

In general there are two ways to set the gpt parameters:

a) settings in the gpt.vmoptions file

b) flags when calling the gpt program, like: ‘gpt -e -c 40G -q 12 -J-Xms2G -J-Xmx50G’

Is that right? And do the command-line flags always override the settings in the gpt.vmoptions file?
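To make the question concrete, here is a minimal sketch of how I understand the two options. The layout of gpt.vmoptions (one JVM option per line, without the -J prefix) is my assumption, not something I found documented; the command line is the one quoted above, and the sketch only prints it instead of running gpt.

```shell
# Option a) gpt.vmoptions, assumed layout: one JVM option per line, no -J prefix.
#   -Xms2G
#   -Xmx50G

# Option b) the same JVM options passed as flags, each prefixed with -J,
# next to the gpt-specific flags -e, -c and -q.
gpt_cmd="gpt -e -c 40G -q 12 -J-Xms2G -J-Xmx50G"

# Print the command for inspection; nothing is executed here.
echo "$gpt_cmd"
```

If the flags really do take precedence, the values from option b) would win whenever both are present.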

The meaning of the parameters:

-Xms - the initially allocated memory when starting a SNAP/gpt instance, quite straightforward to understand, no question.

-Xmx - maximum available memory for the SNAP/gpt instance, again quite straightforward to understand, no question.

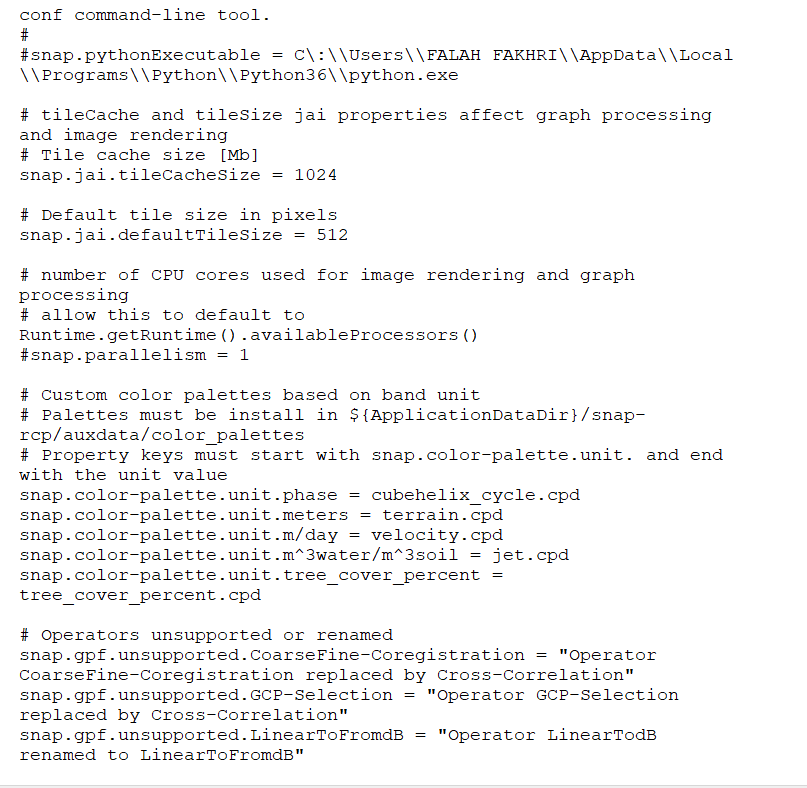

“-q Sets the maximum parallelism used for the computation, i.e. the maximum number of parallel (native) threads. The default parallelism is ‘12’.” Again straightforward to understand.

But…

“-c Sets the tile cache size in bytes. Value can be suffixed with ‘K’, ‘M’ and ‘G’. Must be less than maximum available heap space. If equal to or less than zero, tile caching will be completely disabled. The default tile cache size is ‘1,024M’.”

This is already a bit tricky. What is the recommended setting, and how should it be chosen with respect to the available system memory? Should it be as close as possible to the -Xmx setting, or something different? Can someone explain how the tile caching mechanism in SNAP is implemented, with drawings if possible?
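The only hard rule I can take from the help text is that the tile cache must be smaller than the maximum heap. A minimal sketch of that check follows; the helper name cache_fits_heap is hypothetical, both sizes are assumed to be whole gigabytes, and the 40/50 values are just the ones from my example above, not a recommendation.

```shell
# Hypothetical helper: succeeds only if the tile cache size (GB)
# is strictly smaller than the maximum heap size (GB).
cache_fits_heap() {
  cache_gb=$1
  heap_gb=$2
  [ "$cache_gb" -lt "$heap_gb" ]
}

# Example values taken from the command line quoted earlier in this post.
if cache_fits_heap 40 50; then
  echo "gpt -c 40G -J-Xmx50G"
else
  echo "tile cache must be smaller than the heap" >&2
fi
```

This only enforces the documented constraint; it says nothing about which ratio between -c and -Xmx actually performs best, which is exactly what I’d like to learn.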

“-x Clears the internal tile cache after writing a complete row of tiles to the target product file. This option may be useful if you run into memory problems.”

Is it then safe to keep the -x option always switched on, or can it have negative effects?

“-XX:+AggressiveOpts”: what does setting this parameter actually do?

Is there something else the user needs to know about setting the SNAP performance parameters?

Kaupo

P.S. I’m using SNAP 5 on Linux with 64 GB of RAM for Sentinel-1 IW SLC processing, but I’m more interested in the general principles than in just the parameters that would work for me. I’d bet the whole community would benefit from improved documentation.